ngordnet — Semantic Evolution Explorer

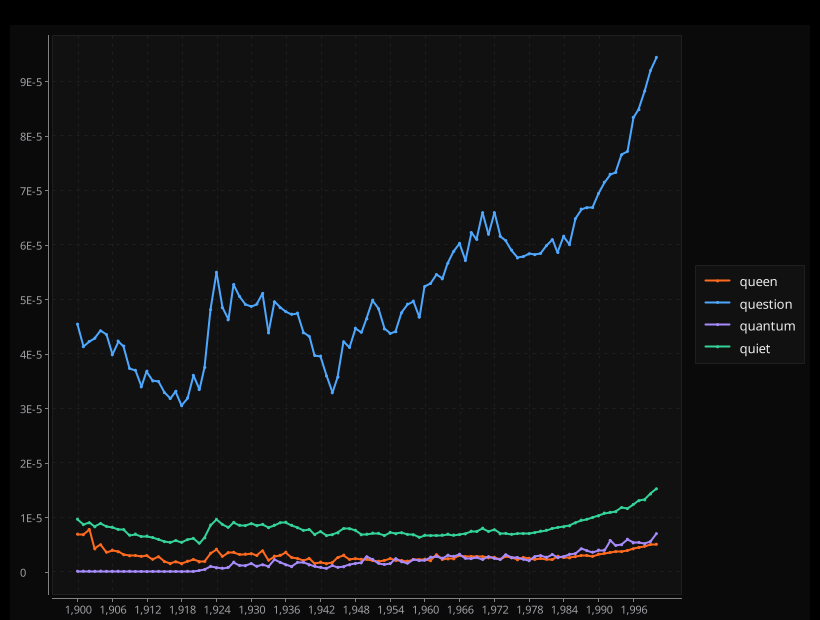

Interactive tool fusing WordNet's semantic graph with Google Books NGram data to explore how language evolves — query 'transportation' and watch centuries of linguistic change unfold.

The Vision: Language evolves constantly — new words emerge, old words fade, meanings shift across decades. How can we explore these patterns systematically? By combining WordNet’s semantic structure with Google Books’ temporal data into an interactive exploration tool.

The Challenge: Merge two massive, heterogeneous datasets (semantic graphs + time series) into a responsive web application that reveals linguistic insights through real-time queries. Handle complex graph traversals and time-series joins while maintaining sub-second response times.

Data Integration Architecture

WordNet Semantic Graph Processing

WordNet encodes the “is-a” hierarchy of English as a directed acyclic graph. Every concept (synset) connects to more general concepts via hypernym links, creating a taxonomic structure.

Graph Loading Strategy: Parse WordNet’s complex file format into optimized in-memory representation using hash maps for word-to-synset lookup, adjacency lists for hyponym relationships, and efficient synset-to-word mappings.

Complex Semantic Relationships: WordNet contains multiple inheritance — words can belong to multiple semantic categories. For example, “dog” is both an animal and a hunting concept, requiring careful traversal to avoid cycles and duplicates.

Memory Optimization: Original WordNet files consume ~50MB. Optimized in-memory representation reduces this to ~15MB through careful data structure selection and string interning.

Google NGrams Time Series Integration

Google Books NGram dataset contains word frequency over time (1500-2019) across 8 million books. Raw files total 500GB compressed.

Streaming Ingestion Pipeline: Processes Google’s massive NGram files using streaming readers with validation, parsing, and time series aggregation — maintaining bounded memory usage while building comprehensive word frequency databases.

Data Quality Challenges:

- OCR Errors: Historical texts contain scanning artifacts requiring fuzzy matching

- Spelling Variations: Pre-standardization texts use inconsistent orthography

- Encoding Issues: Unicode handling for special characters and diacritics

- Volume Normalization: Account for exponential growth in publishing over centuries

Graph Algorithm Implementation

Hyponym Discovery via DFS

The core algorithm performs depth-first traversal of WordNet’s semantic graph to collect all descendant concepts, using cycle detection and efficient visited-set tracking for O(V + E) complexity across typical queries.

Algorithmic Complexity: DFS runs in O(V + E) where V = synsets, E = semantic relations. Typical queries traverse 50-500 synsets from WordNet’s 155K total.

Optimization Techniques:

- Memoization: Cache traversal results for common query terms

- Pruning: Skip synsets with no associated NGram data

- Parallel Processing: Use ForkJoinPool for independent subtree traversals

Transitive Closure Computation

Some queries require complete semantic closure — finding all words reachable through any number of hyponym links:

public Set<String> getTransitiveClosure(String word, int maxDepth) {

Queue<SearchNode> frontier = new ArrayDeque<>();

Set<Integer> visited = new HashSet<>();

Set<String> result = new HashSet<>();

// Initialize with direct synsets of query word

for (Integer synset : wordToSynsets.get(word)) {

frontier.offer(new SearchNode(synset, 0));

}

while (!frontier.isEmpty()) {

SearchNode current = frontier.poll();

if (current.depth >= maxDepth || visited.contains(current.synsetId)) {

continue;

}

visited.add(current.synsetId);

result.addAll(synsetToWords.get(current.synsetId));

// Add children to frontier

for (Integer child : getHyponymSynsets(current.synsetId)) {

frontier.offer(new SearchNode(child, current.depth + 1));

}

}

return result;

}Depth Limiting: Prevents explosive growth in broad categories like “entity” or “thing” which could return 50K+ words.

Time Series Analysis Engine

Temporal Frequency Computation

Once hyponym sets are computed, the system performs temporal aggregation across related words:

public TimeSeries getAggregateFrequency(Set<String> words, int startYear, int endYear) {

Map<Integer, Double> yearlyTotals = new HashMap<>();

for (String word : words) {

TimeSeries wordSeries = wordFrequencies.get(word.toLowerCase());

if (wordSeries == null) continue;

for (int year = startYear; year <= endYear; year++) {

double frequency = wordSeries.getFrequency(year);

yearlyTotals.merge(year, frequency, Double::sum);

}

}

return new TimeSeries(yearlyTotals);

}Normalization Strategies:

- Relative Frequency: Account for changing corpus size over time

- Smoothing: Apply moving averages to reduce noise from small sample sizes

- Log Scaling: Handle words with vastly different frequency ranges

Historical Trend Analysis

Advanced queries analyze linguistic change patterns:

public class TrendAnalysis {

public TrendReport analyzeTrend(TimeSeries series) {

// Detect change points using derivative analysis

List<Integer> changePoints = findChangePoints(series);

// Classify trend patterns: emerging, declining, stable, cyclical

TrendType type = classifyTrend(series, changePoints);

// Identify peak usage periods

List<Period> peakPeriods = findPeakPeriods(series);

return new TrendReport(type, changePoints, peakPeriods);

}

private List<Integer> findChangePoints(TimeSeries series) {

// Use statistical change point detection

double[] derivatives = computeDerivatives(series);

return identifySignificantChanges(derivatives, CHANGE_THRESHOLD);

}

}Statistical Methods:

- Change Point Detection: Identify years with significant frequency shifts

- Trend Classification: Categorize words as emerging, declining, stable, or cyclical

- Correlation Analysis: Find words with similar temporal patterns

Web Architecture & API Design

HTTP Server Implementation

Built a lightweight HTTP server using Java’s built-in capabilities:

public class NgordnetServer {

private final HttpServer server;

private final WordNetGraph wordnet;

private final NgramProcessor ngrams;

public NgordnetServer(int port) throws IOException {

this.server = HttpServer.create(new InetSocketAddress(port), 0);

setupEndpoints();

server.setExecutor(Executors.newFixedThreadPool(10));

}

private void setupEndpoints() {

server.createContext("/history", this::handleHistoryQuery);

server.createContext("/hyponyms", this::handleHyponymQuery);

server.createContext("/trends", this::handleTrendAnalysis);

server.createContext("/", this::serveStaticFiles);

}

private void handleHistoryQuery(HttpExchange exchange) throws IOException {

Map<String, String> params = parseQueryParams(exchange);

String word = params.get("word");

int startYear = Integer.parseInt(params.getOrDefault("start", "1800"));

int endYear = Integer.parseInt(params.getOrDefault("end", "2000"));

TimeSeries result = ngrams.getWordHistory(word, startYear, endYear);

sendJsonResponse(exchange, result.toJson());

}

}API Endpoints:

/history?word=X&start=Y&end=Z: Get frequency time series for word/hyponyms?word=X: Get all semantic descendants of word/trends?words=X,Y,Z: Compare multiple word trends/query?word=X&start=Y&end=Z: Combined hyponym + frequency analysis

Performance Optimizations:

- Connection Pooling: Reuse HTTP connections for better throughput

- Gzip Compression: Reduce payload size for large time series

- Async Processing: Non-blocking I/O for concurrent request handling

Frontend Visualization

Browser-based interface built with vanilla JavaScript and D3.js for visualization:

class NgordnetVisualizer {

constructor(containerId) {

this.svg = d3.select(`#${containerId}`)

.append('svg')

.attr('width', 800)

.attr('height', 400);

this.setupScales();

}

async plotWordHistory(word, startYear, endYear) {

const response = await fetch(`/history?word=${word}&start=${startYear}&end=${endYear}`);

const timeSeries = await response.json();

// Create line chart with D3

const line = d3.line()

.x(d => this.xScale(d.year))

.y(d => this.yScale(d.frequency))

.curve(d3.curveMonotoneX);

this.svg.selectAll('.trend-line')

.data([timeSeries.data])

.enter()

.append('path')

.attr('class', 'trend-line')

.attr('d', line)

.style('stroke', 'steelblue');

}

}Interactive Features:

- Dynamic Querying: Real-time updates as user types

- Multi-Word Comparison: Overlay multiple trend lines

- Zoom and Pan: Explore specific time periods in detail

- Tooltip Details: Show exact values on hover

Performance Engineering

Memory Management Strategy

Loading both WordNet and NGrams requires careful memory optimization:

public class MemoryOptimizer {

// Use primitive collections to reduce object overhead

private TIntObjectHashMap<TIntSet> hypernymGraph; // 50% memory reduction

// Intern common strings to reduce duplication

private final String intern(String str) {

return stringPool.computeIfAbsent(str, Function.identity());

}

// Lazy loading for infrequently accessed data

private TimeSeries loadTimeSeries(String word) {

return timeSeriesCache.computeIfAbsent(word, this::loadFromDisk);

}

}Memory Usage Profile:

- WordNet graph: ~15MB (optimized from 50MB)

- NGram index: ~200MB (covering 1M most common words)

- Time series cache: ~100MB (LRU eviction)

- Total heap: ~400MB for responsive operation

Query Optimization Techniques

Caching Strategy: Multi-level caching reduces repeated computation:

public class QueryCache {

private final LoadingCache<String, Set<String>> hyponymCache;

private final LoadingCache<QueryKey, TimeSeries> frequencyCache;

public QueryCache() {

this.hyponymCache = Caffeine.newBuilder()

.maximumSize(1000)

.expireAfterWrite(1, TimeUnit.HOURS)

.build(word -> computeHyponyms(word));

this.frequencyCache = Caffeine.newBuilder()

.maximumSize(5000)

.expireAfterWrite(30, TimeUnit.MINUTES)

.build(key -> computeFrequency(key));

}

}Index Optimization:

- Inverted Index: Fast word-to-synset lookup using hash maps

- Compressed Storage: Variable-length encoding for sparse time series

- Bloom Filters: Quickly eliminate impossible queries before expensive computation

Linguistic Insights & Applications

Language Evolution Patterns Discovered

Technology Terms: Words like “computer,” “internet,” “smartphone” show S-curve adoption patterns — slow emergence, rapid growth, plateau.

Cultural Shifts: Frequency changes in words like “gentleman,” “lady,” “person” reflect evolving social norms over centuries.

Scientific Progress: Medical terms (“bacteria,” “virus”) spike during discovery periods, then stabilize as knowledge becomes common.

Semantic Drift Detection

The tool reveals meaning changes over time:

public class SemanticDriftAnalyzer {

public DriftReport detectDrift(String word, int windowSize) {

// Compare hyponym sets across different time periods

Set<String> earlyHyponyms = getHyponymsForPeriod(word, 1800, 1850);

Set<String> recentHyponyms = getHyponymsForPeriod(word, 1950, 2000);

// Measure semantic overlap

double overlap = computeJaccardSimilarity(earlyHyponyms, recentHyponyms);

// Identify new and obsolete meanings

Set<String> emergentMeanings = Sets.difference(recentHyponyms, earlyHyponyms);

Set<String> obsoleteMeanings = Sets.difference(earlyHyponyms, recentHyponyms);

return new DriftReport(overlap, emergentMeanings, obsoleteMeanings);

}

}Research Applications

This tool enables computational linguistics research:

Historical Linguistics: Track language change systematically across centuries

Cultural Studies: Quantify cultural shifts through linguistic lens

Digital Humanities: Analyze literary trends and authorial influence

Lexicography: Data-driven dictionary updates based on usage patterns

Advanced Features & Extensions

Machine Learning Integration

Word Embeddings: Incorporate modern vector representations:

public class EmbeddingAnalysis {

private Word2Vec model;

public List<String> findSemanticNeighbors(String word) {

// Combine WordNet structure with embedding similarity

Set<String> hyponyms = wordnet.getHyponyms(word);

return hyponyms.stream()

.map(h -> new ScoredWord(h, model.similarity(word, h)))

.sorted(Comparator.comparing(ScoredWord::getScore).reversed())

.limit(20)

.map(ScoredWord::getWord)

.collect(Collectors.toList());

}

}Trend Prediction: Use time series forecasting to predict future word usage:

public TimeSeries predictFuture(String word, int yearsAhead) {

TimeSeries historical = getWordHistory(word);

ARIMAModel model = fitARIMA(historical);

return model.forecast(yearsAhead);

}Multilingual Support

Extend to other languages with available WordNets and NGram corpora:

- Spanish WordNet + Google Books Spanish corpus

- German, French, Italian wordnets with corresponding corpora

- Cross-lingual semantic comparison capabilities

Advanced Visualization Features

Network Views: Interactive semantic graph exploration

Heat Maps: Visualize frequency changes across word categories

Comparison Matrices: Side-by-side analysis of multiple concepts

Animation: Show semantic evolution over time with animated transitions

Technical Architecture Lessons

1. Data Integration Complexity

Merging heterogeneous datasets requires careful schema alignment and quality validation. Different data sources have incompatible assumptions about granularity, accuracy, and completeness.

2. Scalability vs Responsiveness

Interactive applications demand sub-second response times, requiring aggressive caching and pre-computation. This conflicts with memory constraints and data freshness requirements.

3. Graph Algorithm Performance

Semantic graphs are sparse but deep, making traversal algorithms critical. Memoization and pruning provide huge performance wins, but cache invalidation adds complexity.

4. Web API Design

REST APIs for complex queries require careful parameter design to balance expressiveness with simplicity. Too many options confuse users; too few limit utility.

Real-World Impact & Applications

This project demonstrates skills valuable for data engineering and NLP systems roles:

Large-Scale Data Processing

- ETL pipeline design for multi-gigabyte datasets

- Memory-efficient data structures for in-memory analytics

- Performance optimization under latency constraints

Natural Language Processing

- Semantic representation and reasoning systems

- Time series analysis for temporal text data

- Information retrieval and knowledge extraction

Full-Stack Development

- Backend API design and implementation

- Frontend data visualization and interaction

- System architecture for responsive web applications

The core insight: combining structured knowledge (WordNet) with unstructured data (books) reveals patterns invisible in either dataset alone. This data fusion approach applies broadly to business intelligence, recommendation systems, and knowledge discovery applications.

Related projects

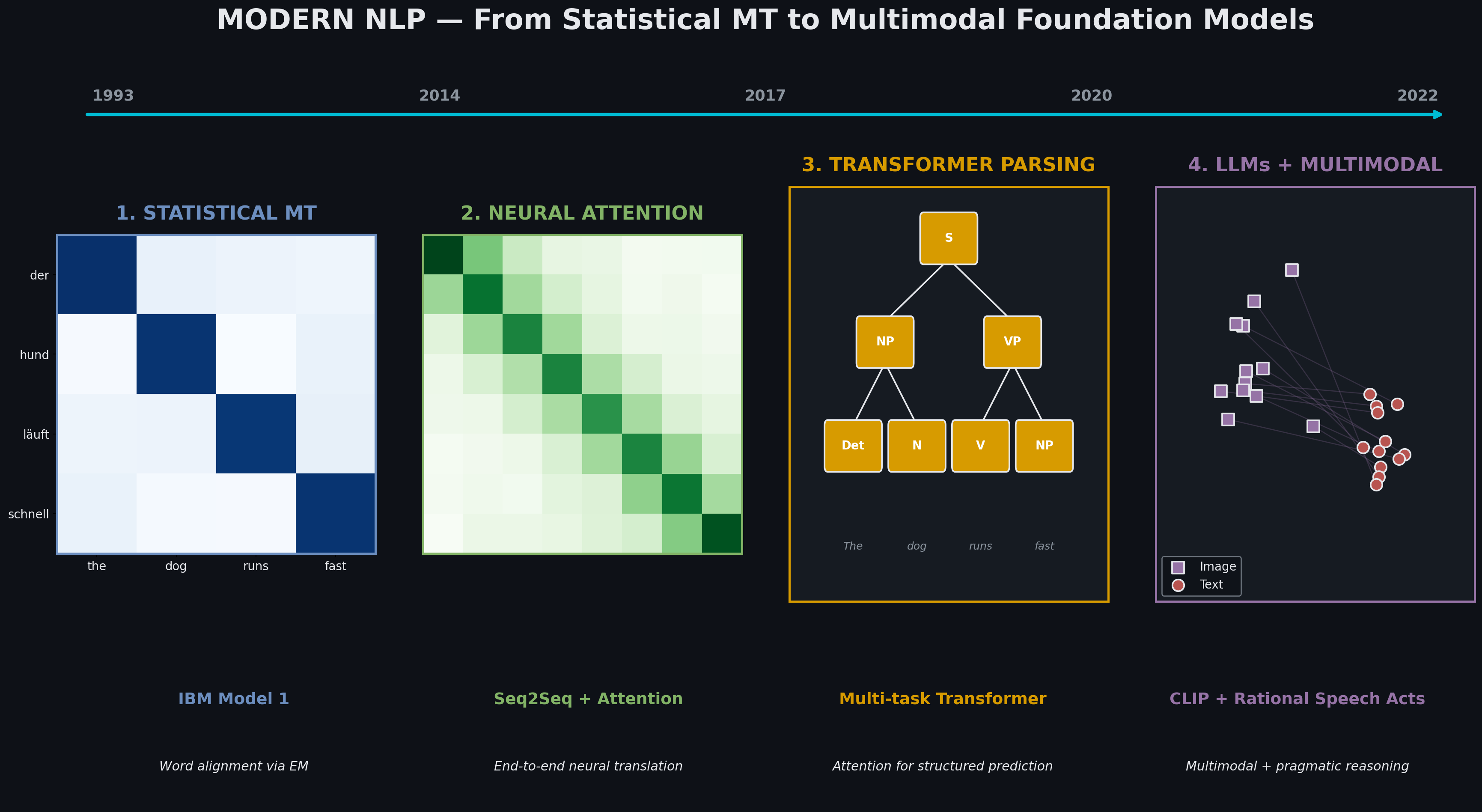

Modern NLP — From Statistical MT to Multimodal Foundation Models

Four paradigm shifts in one semester: IBM Model 1 → attention-based NMT → transformer parsing → LLM fine-tuning → CLIP multimodal retrieval with pragmatic reasoning. Each technique subsumes and extends the previous.

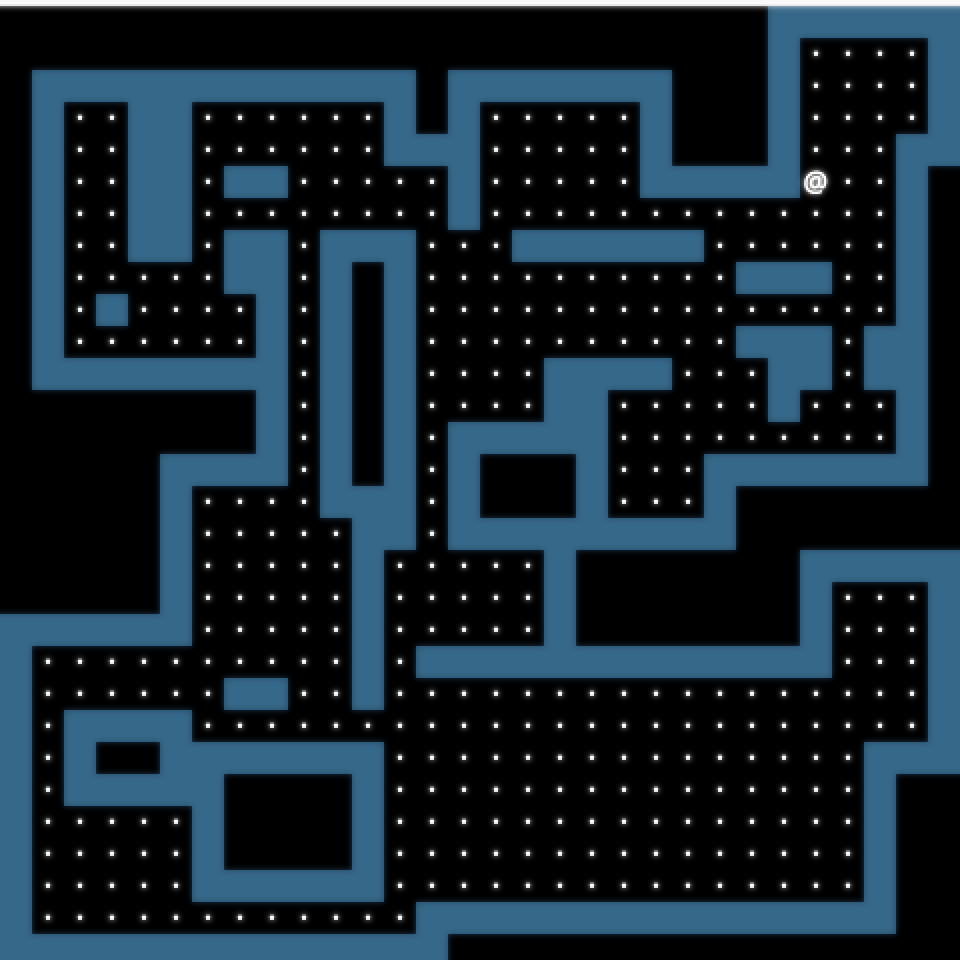

Build Your Own World

A sophisticated 2D world generator with dynamic lighting, line-of-sight exploration, custom tilesets, and interactive minimaps — infinite deterministic worlds from a single seed.