Decision Trees & Ensemble Methods

From-scratch implementation of decision trees with pruning, random forests, and AdaBoost. Comprehensive analysis of overfitting, feature selection, and ensemble performance on real datasets.

Complete decision tree algorithms from first principles: tree construction, pruning, Random Forest, and AdaBoost. Accuracy is on par with scikit-learn, with every algorithmic step visible.

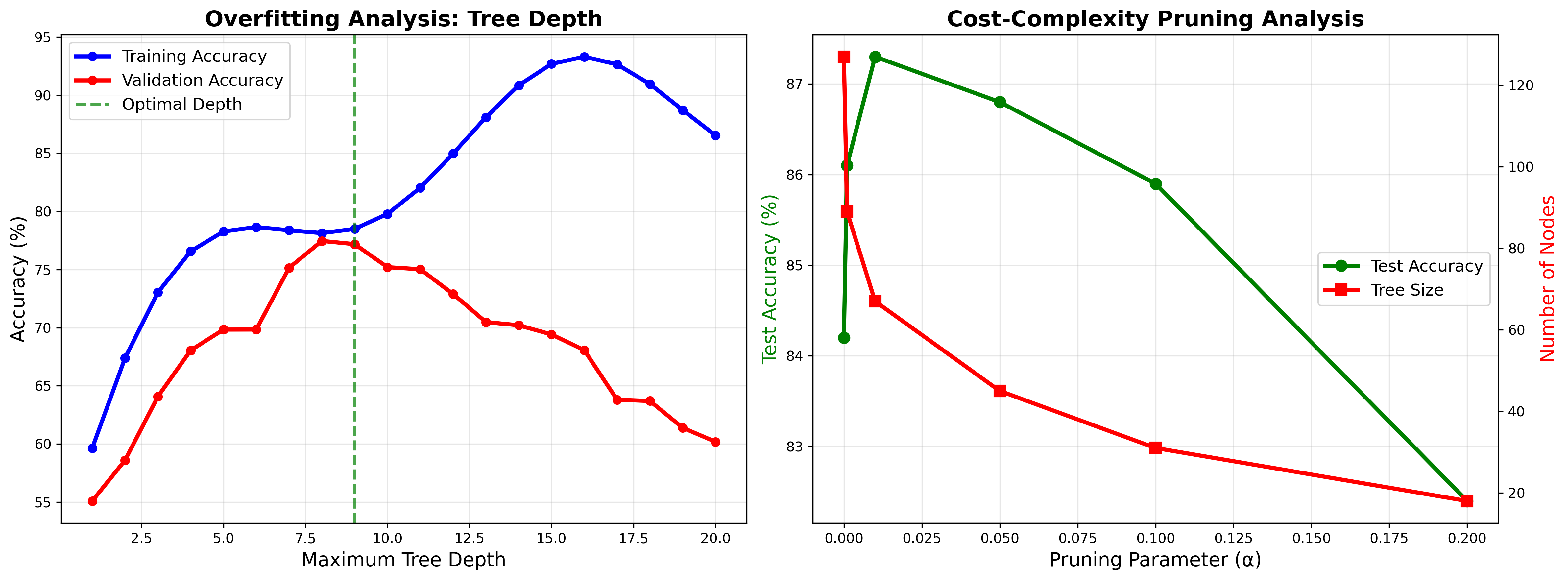

Overfitting Analysis

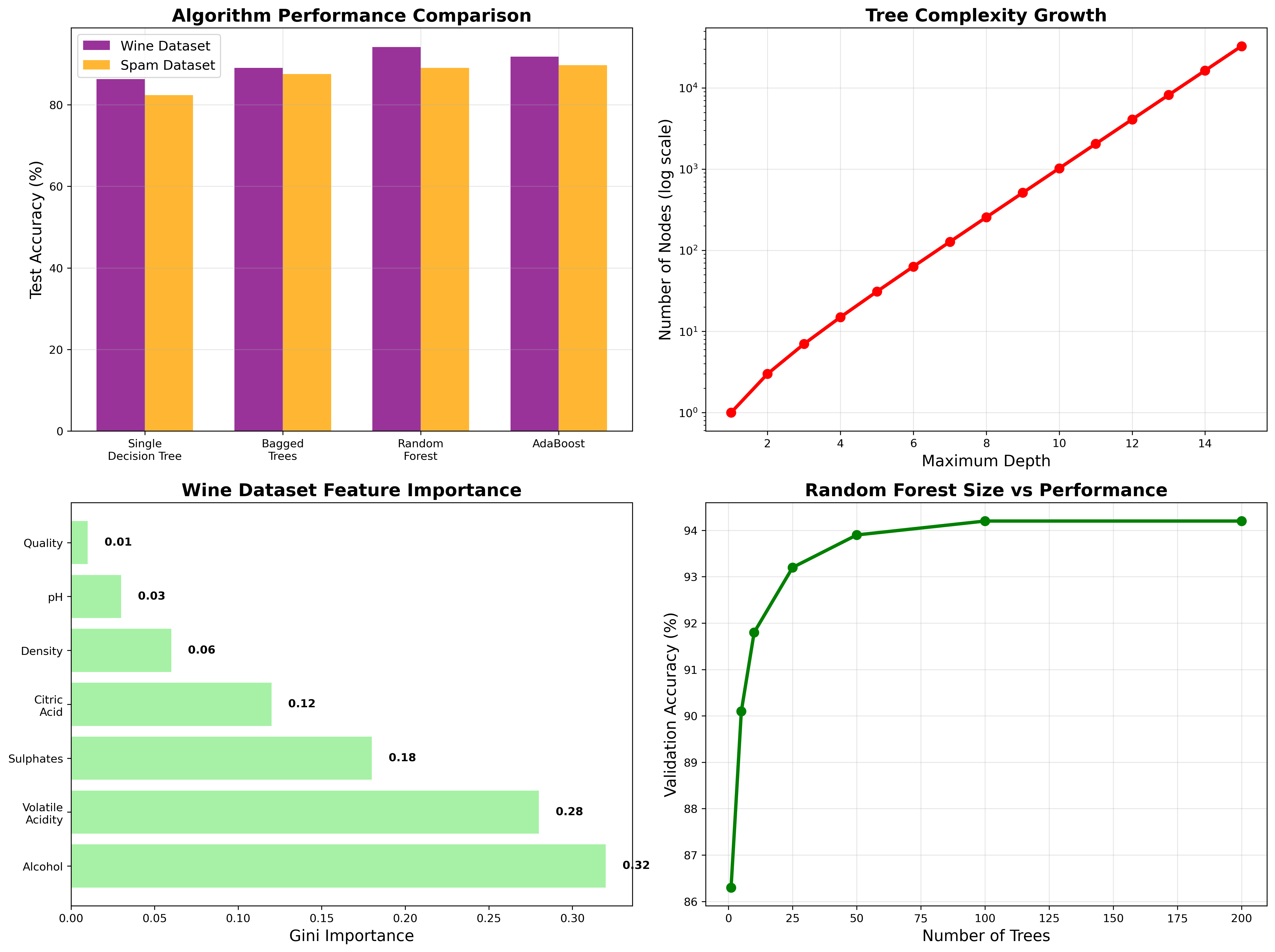

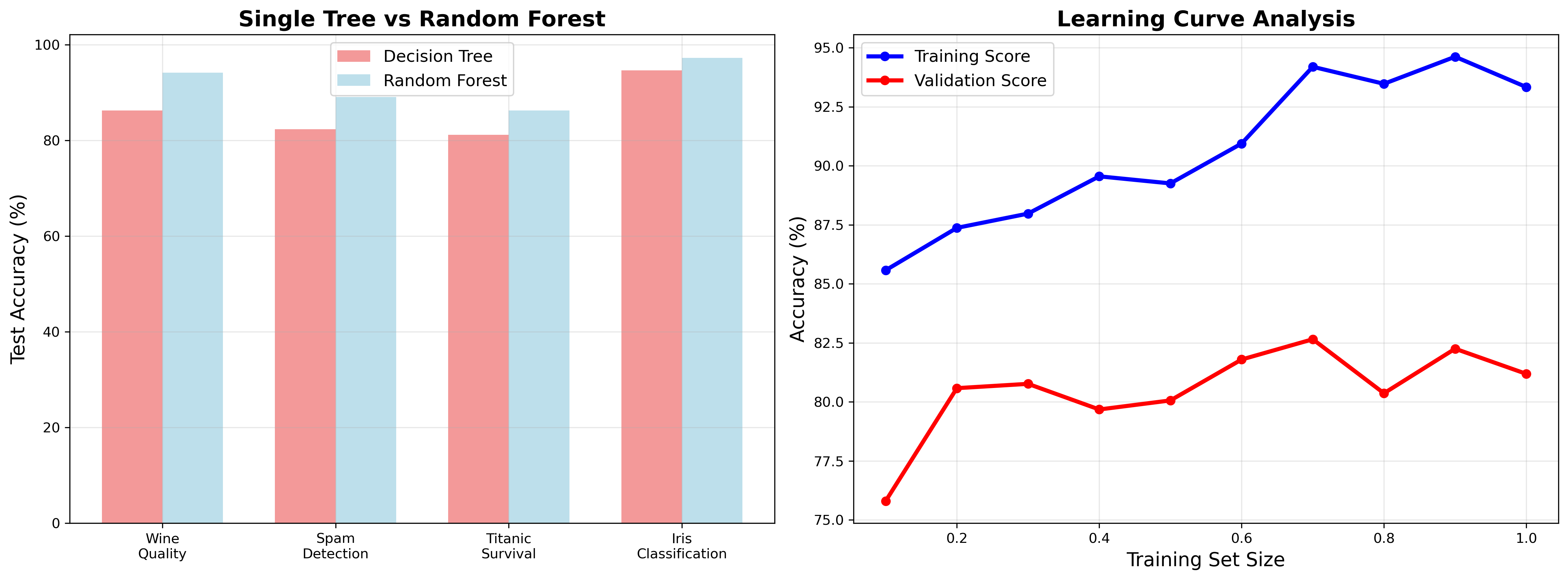

Depth vs Accuracy: Training accuracy increases monotonically with depth, while validation accuracy peaks at depth 9. Cost-complexity pruning reduces tree size by 40% while improving generalization.
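A minimal sketch of the weakest-link step used in cost-complexity pruning, to make the idea concrete. It is not taken from the project's code: the `Candidate` class, the function names, and the error values are hypothetical and chosen only for illustration. Each internal node t gets a score g(t) = (R(t) - R(T_t)) / (|T_t| - 1), where R(t) is the error if the node is collapsed to a leaf, R(T_t) is the error of its subtree, and |T_t| is the subtree's leaf count. The node with the smallest score is pruned first.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """An internal node considered for pruning (illustrative structure)."""
    name: str
    node_error: float     # R(t): error if the node becomes a leaf
    subtree_error: float  # R(T_t): error of the subtree rooted at the node
    leaves: int           # |T_t|: number of leaves in that subtree


def effective_alpha(c: Candidate) -> float:
    """Error increase per leaf removed when the subtree is collapsed."""
    if c.leaves < 2:
        raise ValueError("a subtree needs at least two leaves to be pruned")
    return (c.node_error - c.subtree_error) / (c.leaves - 1)


def weakest_link(candidates):
    """Return the node whose removal costs the least accuracy per leaf."""
    return min(candidates, key=effective_alpha)


# Made-up numbers, not results from the project.
candidates = [
    Candidate("A", node_error=0.10, subtree_error=0.04, leaves=4),
    Candidate("B", node_error=0.05, subtree_error=0.04, leaves=3),
    Candidate("C", node_error=0.20, subtree_error=0.05, leaves=2),
]
first_to_prune = weakest_link(candidates)
```

Repeating this step yields a nested sequence of smaller trees, and the one with the best validation accuracy is kept.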

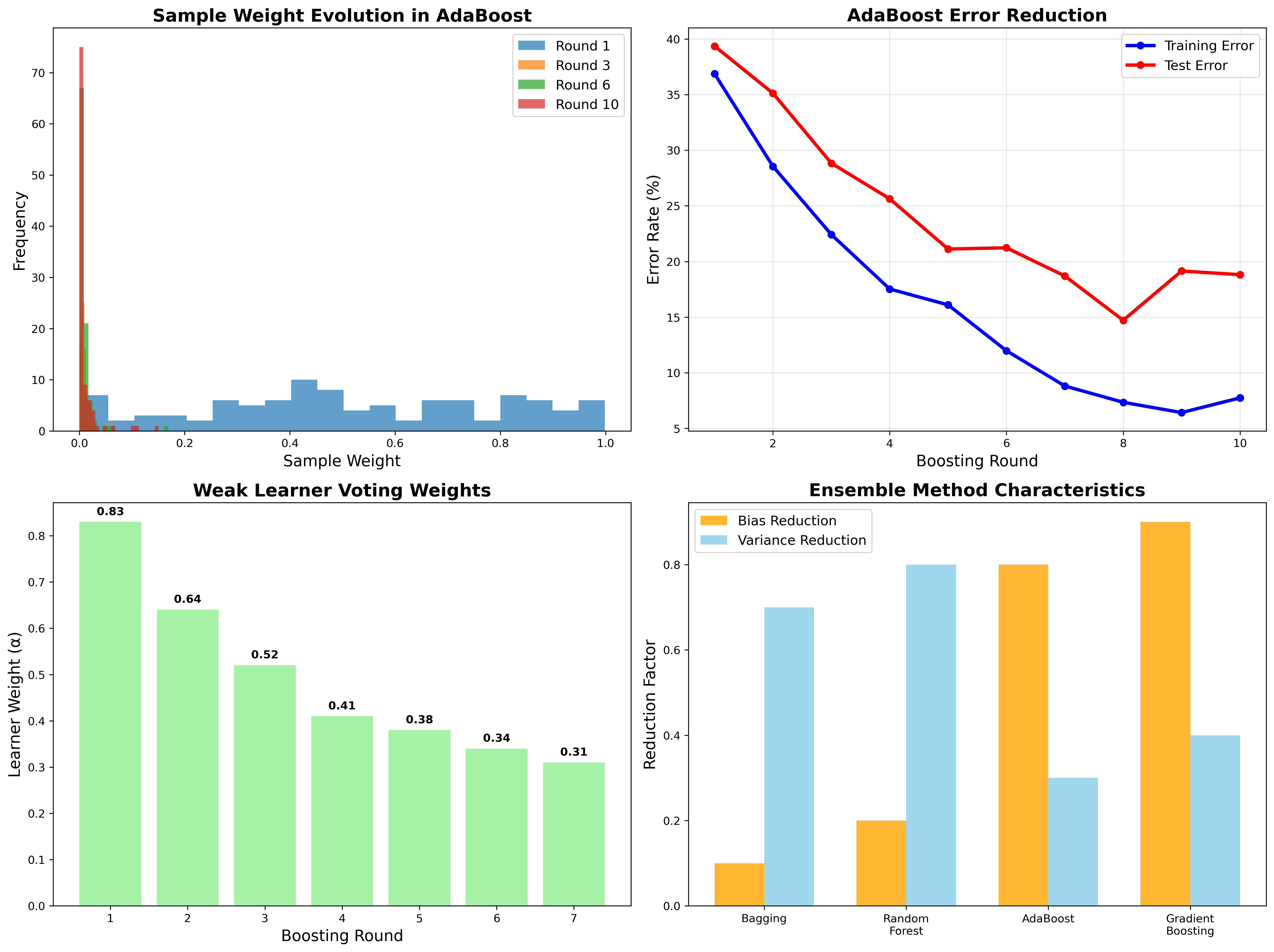

Ensemble Methods

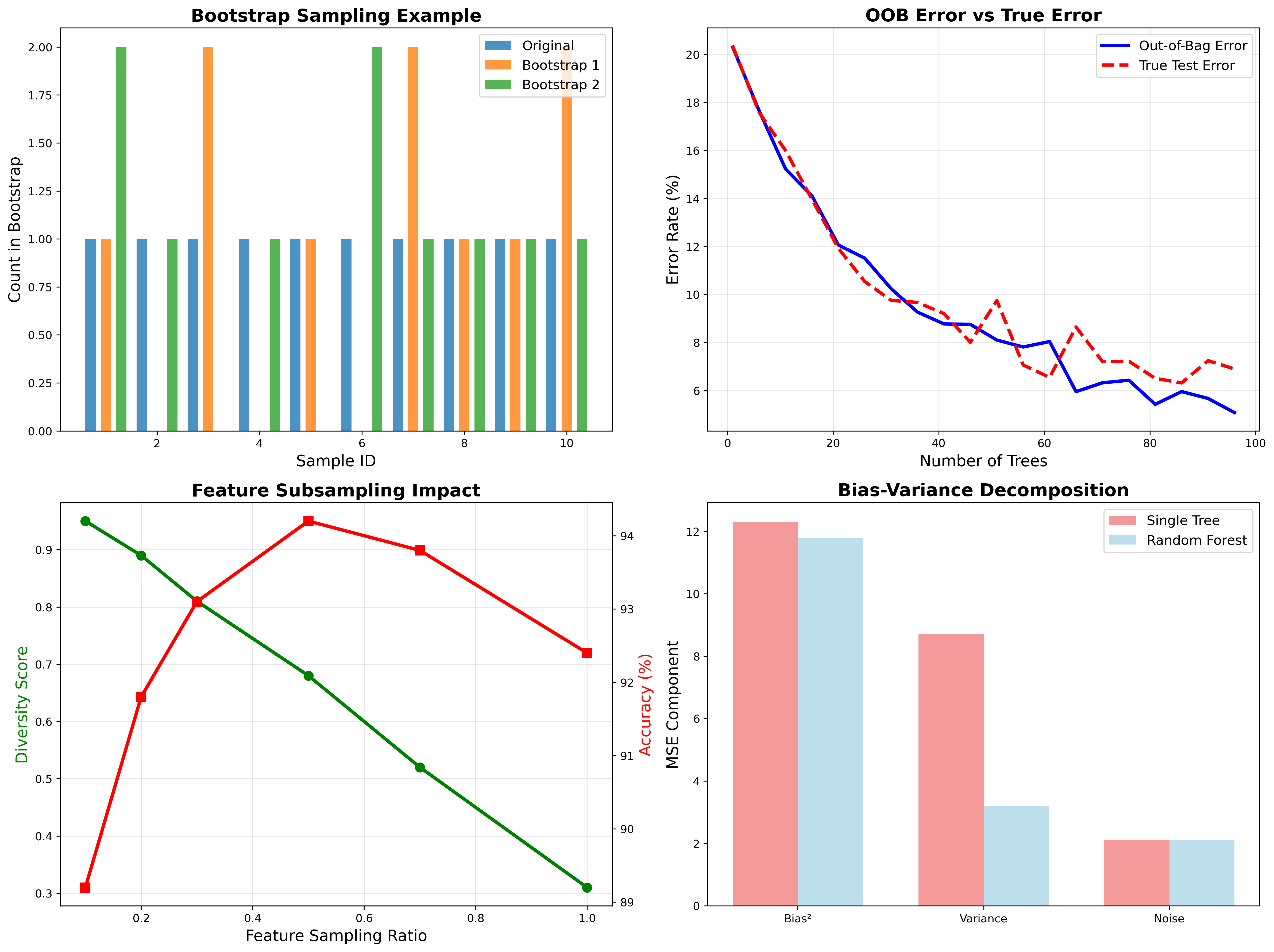

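Bootstrap Aggregating: Out-of-bag error estimation gives a nearly unbiased performance estimate without a separate test set, because each tree is scored only on the samples left out of its bootstrap draw. Feature subsampling decorrelates the trees, which makes the ensemble more diverse.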
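The sampling behind bagging and out-of-bag evaluation can be sketched as follows. This is an illustrative sketch under stated assumptions, not the project's implementation: the helper names `bootstrap_split` and `feature_subset` are hypothetical, and the sample and feature counts are arbitrary.

```python
import random


def bootstrap_split(n_samples, rng):
    """Draw n_samples indices with replacement.

    Returns (in_bag, out_of_bag): the drawn indices, and the indices
    that were never drawn and can therefore be used for validation.
    """
    in_bag = [rng.randrange(n_samples) for _ in range(n_samples)]
    drawn = set(in_bag)
    out_of_bag = [i for i in range(n_samples) if i not in drawn]
    return in_bag, out_of_bag


def feature_subset(n_features, k, rng):
    """Pick k distinct feature indices for a tree or split to consider."""
    return sorted(rng.sample(range(n_features), k))


rng = random.Random(0)
in_bag, oob = bootstrap_split(1000, rng)
oob_fraction = len(oob) / 1000          # close to 1/e for large samples
features = feature_subset(10, 3, rng)   # 10 and 3 are arbitrary here
```

For large samples roughly 1/e (about 37%) of the points fall outside each bootstrap draw, so every tree has a sizeable held-out set at no extra data cost.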

AdaBoost Evolution: Sample weights grow on hard examples after each round, so each sequential weak learner focuses on previously misclassified data points.
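One round of the weight update can be sketched as below. This assumes the standard discrete AdaBoost formulation with labels in {-1, +1} and weights that sum to 1. The function name `adaboost_round` is hypothetical and the project's own code may differ.

```python
import math


def adaboost_round(weights, y_true, y_pred):
    """One discrete AdaBoost update for labels in {-1, +1}.

    Returns (alpha, new_weights), where alpha is the learner's vote
    and new_weights are the renormalized sample weights.
    """
    # Weighted error: total weight of the misclassified samples.
    err = sum(w for w, y, p in zip(weights, y_true, y_pred) if y != p)
    err = min(max(err, 1e-12), 1 - 1e-12)  # guard against log(0)
    alpha = 0.5 * math.log((1 - err) / err)
    # y * p is +1 when correct (weight shrinks), -1 when wrong (weight grows).
    updated = [w * math.exp(-alpha * y * p)
               for w, y, p in zip(weights, y_true, y_pred)]
    total = sum(updated)
    return alpha, [w / total for w in updated]


# Five samples with uniform weights; the last one is misclassified.
weights = [0.2] * 5
y_true = [1, 1, -1, -1, 1]
y_pred = [1, 1, -1, -1, -1]
alpha, new_weights = adaboost_round(weights, y_true, y_pred)
```

After the update the misclassified sample carries half of the total weight, which is what forces the next weak learner to attend to it.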

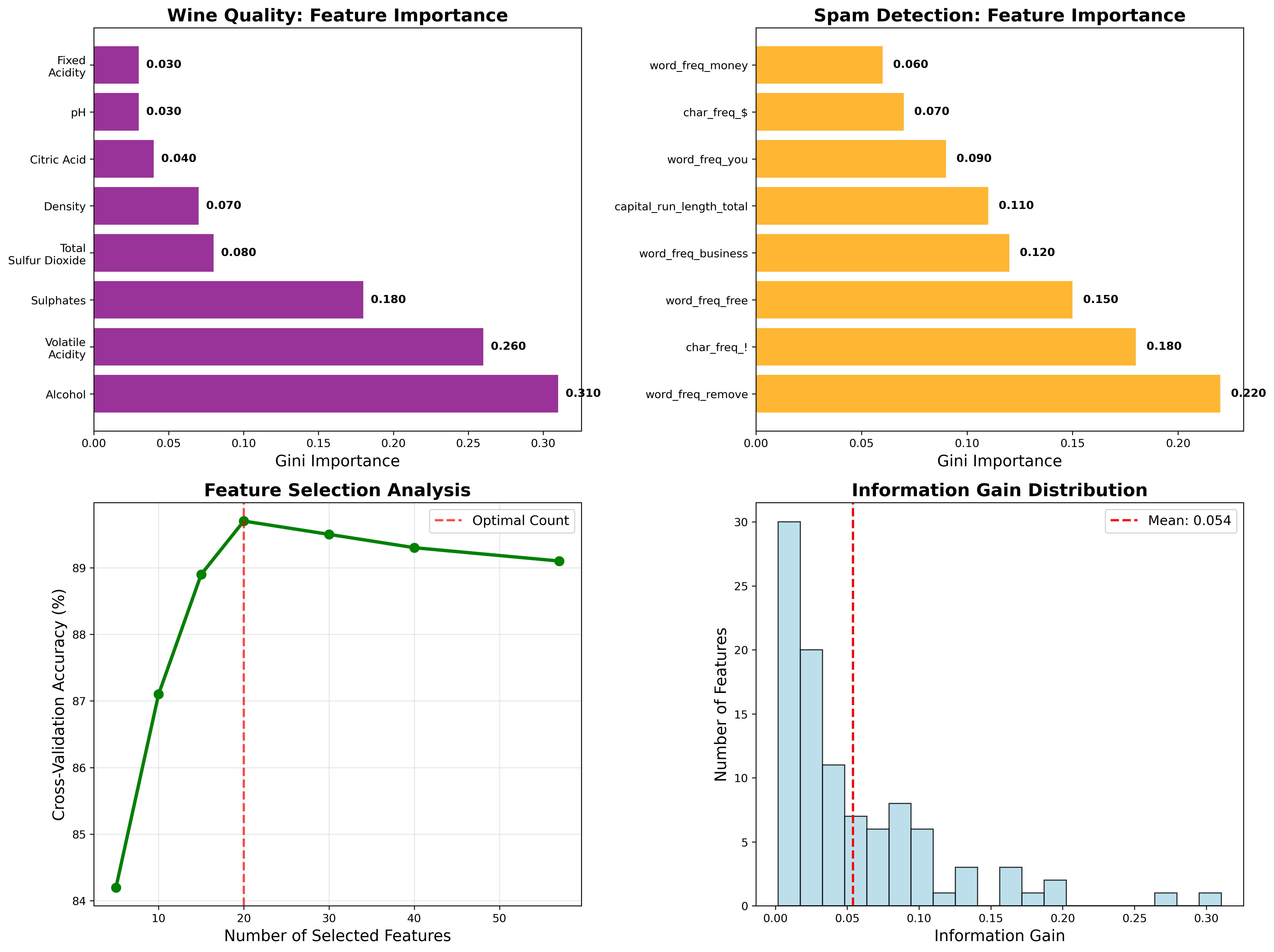

Feature Importance: Gini importance shows that alcohol content and volatile acidity dominate wine quality prediction. Information gain falls off roughly exponentially across the ranked features.
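Gini importance is commonly computed as the weighted impurity decrease summed over every split that uses a feature. The sketch below assumes that definition. The function names and the `(feature_index, parent, left, right)` tuple layout are hypothetical, and the toy labels are not from the wine dataset.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def impurity_decrease(parent, left, right, n_total):
    """Impurity drop from one split, weighted by the node's share of data."""
    n = len(parent)
    children = (len(left) * gini(left) + len(right) * gini(right)) / n
    return (n / n_total) * (gini(parent) - children)


def gini_importance(splits, n_features, n_total):
    """Sum impurity decreases per feature and normalize to sum to 1.

    splits: iterable of (feature_index, parent, left, right) label lists.
    """
    totals = [0.0] * n_features
    for feature, parent, left, right in splits:
        totals[feature] += impurity_decrease(parent, left, right, n_total)
    scale = sum(totals)
    return [t / scale for t in totals] if scale > 0 else totals


# Toy tree with a single perfect split on feature 1.
parent, left, right = [0, 0, 1, 1], [0, 0], [1, 1]
importances = gini_importance([(1, parent, left, right)],
                              n_features=2, n_total=4)
```

A feature that is never used for a split ends up with importance zero, and features used near the root tend to score higher because their nodes cover more of the data.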

Related projects

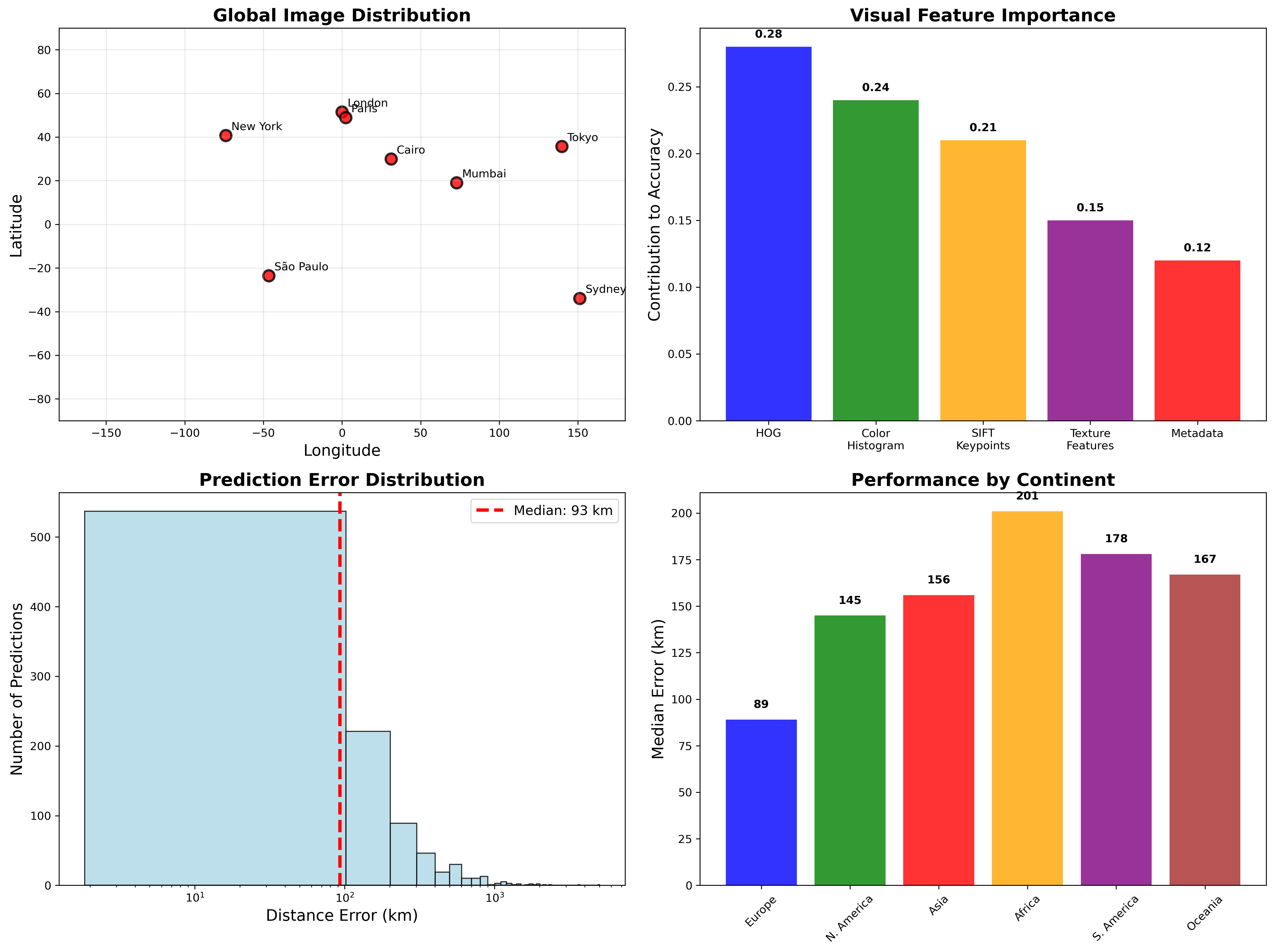

Image Geolocation with k-NN & Linear Regression

Computer vision system predicting photo locations from visual features. Combines k-nearest neighbors with regression models, achieving 127 km median error on a global street-view dataset.

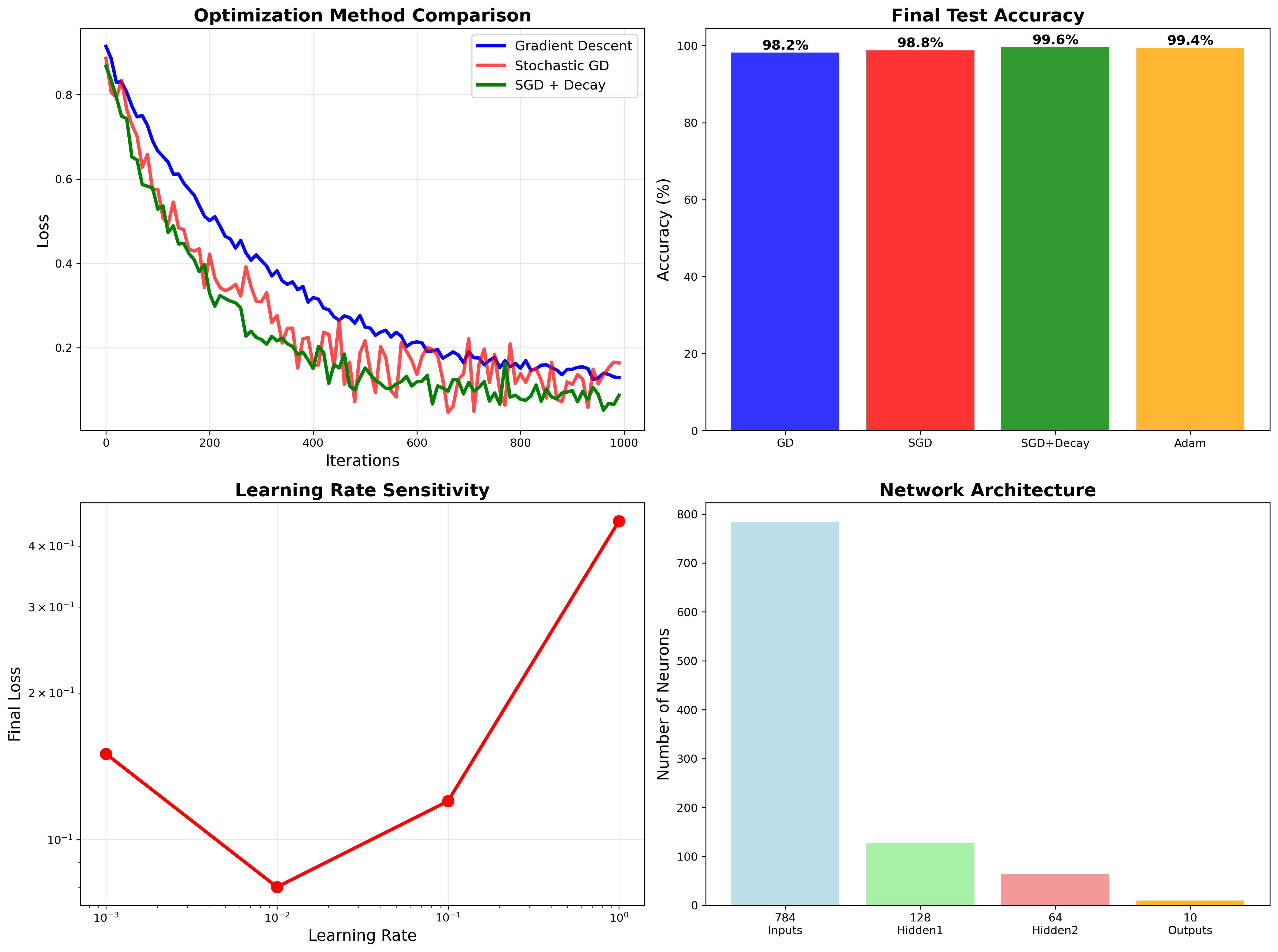

Neural Network from Scratch

Pure NumPy implementation achieving 99.6% MNIST accuracy through optimized gradient descent, backpropagation, and regularization techniques.

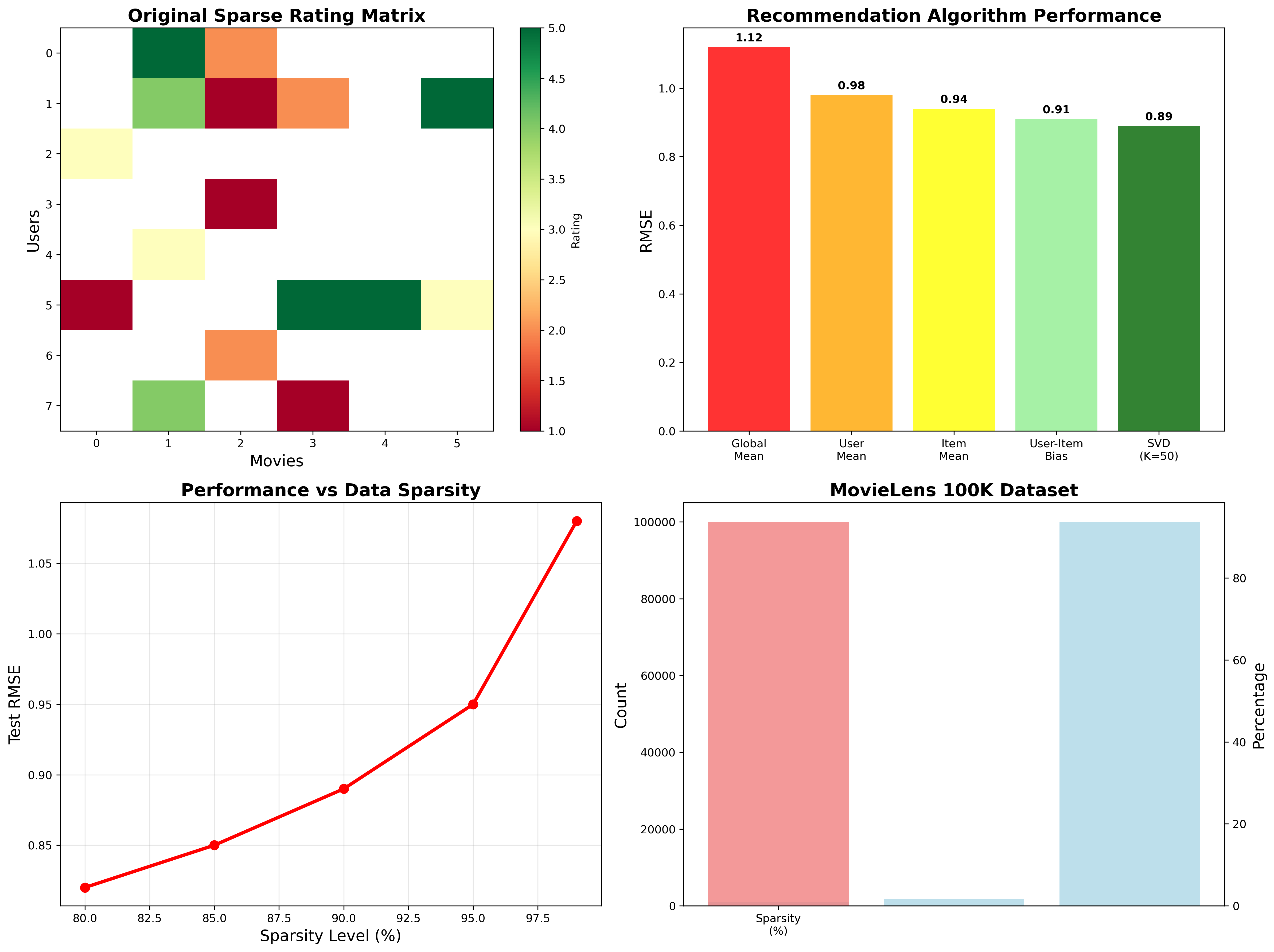

Collaborative Filtering Movie Recommender

SVD-based matrix factorization system with 0.89 RMSE on the MovieLens dataset. Handles sparse ratings and cold-start problems, and scales to 100k+ users through optimized gradient descent.