Neural Network from Scratch

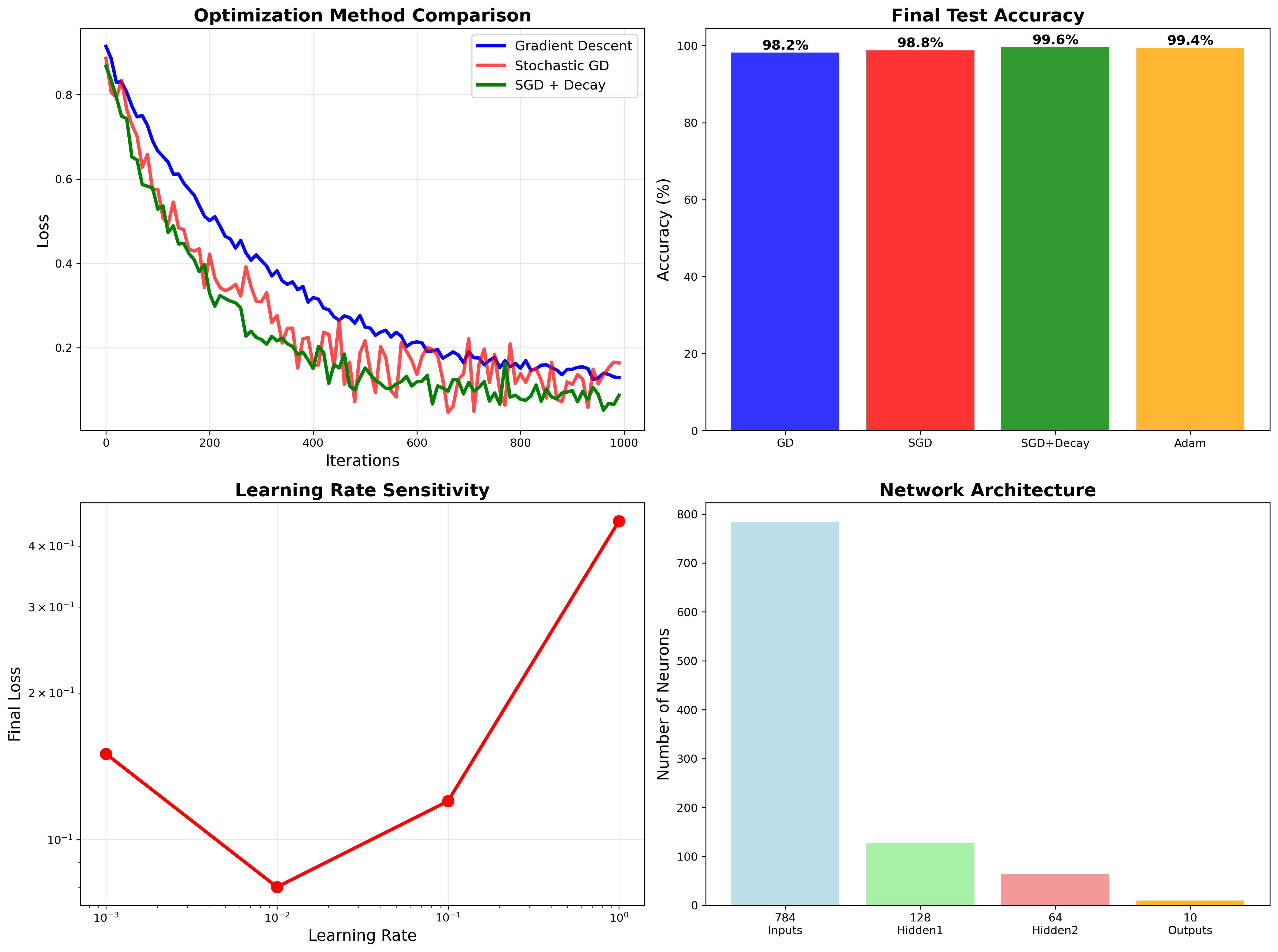

Pure NumPy neural network achieving 99.6% MNIST accuracy through hand-coded backpropagation, optimized SGD, and regularization. No frameworks, complete algorithmic transparency.
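To make "hand-coded gradients" concrete, here is a minimal sketch of a one-hidden-layer classifier with a manual backward pass and a plain SGD update. It is an illustration, not the project's actual code: the layer sizes, He initialization, L2 penalty, and all function names (`init_params`, `forward`, `loss_and_grads`, `sgd_step`) are assumptions.

```python
import numpy as np

def init_params(n_in, n_hidden, n_out, rng):
    # He initialization for the ReLU layer (an assumption, not taken from the project)
    return {
        "W1": rng.normal(0.0, np.sqrt(2.0 / n_in), (n_in, n_hidden)),
        "b1": np.zeros(n_hidden),
        "W2": rng.normal(0.0, np.sqrt(2.0 / n_hidden), (n_hidden, n_out)),
        "b2": np.zeros(n_out),
    }

def forward(params, X):
    z1 = X @ params["W1"] + params["b1"]
    a1 = np.maximum(z1, 0.0)                             # ReLU
    logits = a1 @ params["W2"] + params["b2"]
    logits = logits - logits.max(axis=1, keepdims=True)  # numerically stable softmax
    exp = np.exp(logits)
    probs = exp / exp.sum(axis=1, keepdims=True)
    return probs, (z1, a1)

def loss_and_grads(params, X, y, l2=1e-4):
    n = X.shape[0]
    probs, (z1, a1) = forward(params, X)
    # Cross-entropy plus an L2 weight penalty (hypothetical regularizer choice)
    data_loss = -np.log(probs[np.arange(n), y] + 1e-12).mean()
    reg_loss = 0.5 * l2 * (np.sum(params["W1"] ** 2) + np.sum(params["W2"] ** 2))

    # Backward pass: gradient of mean softmax cross-entropy w.r.t. the logits
    dlogits = probs.copy()
    dlogits[np.arange(n), y] -= 1.0
    dlogits /= n

    grads = {
        "W2": a1.T @ dlogits + l2 * params["W2"],
        "b2": dlogits.sum(axis=0),
    }
    da1 = dlogits @ params["W2"].T
    dz1 = da1 * (z1 > 0)                                 # ReLU passes gradient only where z1 > 0
    grads["W1"] = X.T @ dz1 + l2 * params["W1"]
    grads["b1"] = dz1.sum(axis=0)
    return data_loss + reg_loss, grads

def sgd_step(params, grads, lr):
    # In-place gradient descent update
    for name in params:
        params[name] -= lr * grads[name]
```

For MNIST the same functions would be called with `n_in=784` and `n_out=10` on mini-batches of flattened images; comparing an analytic gradient entry against a finite-difference estimate is a quick way to validate a backward pass like this one.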

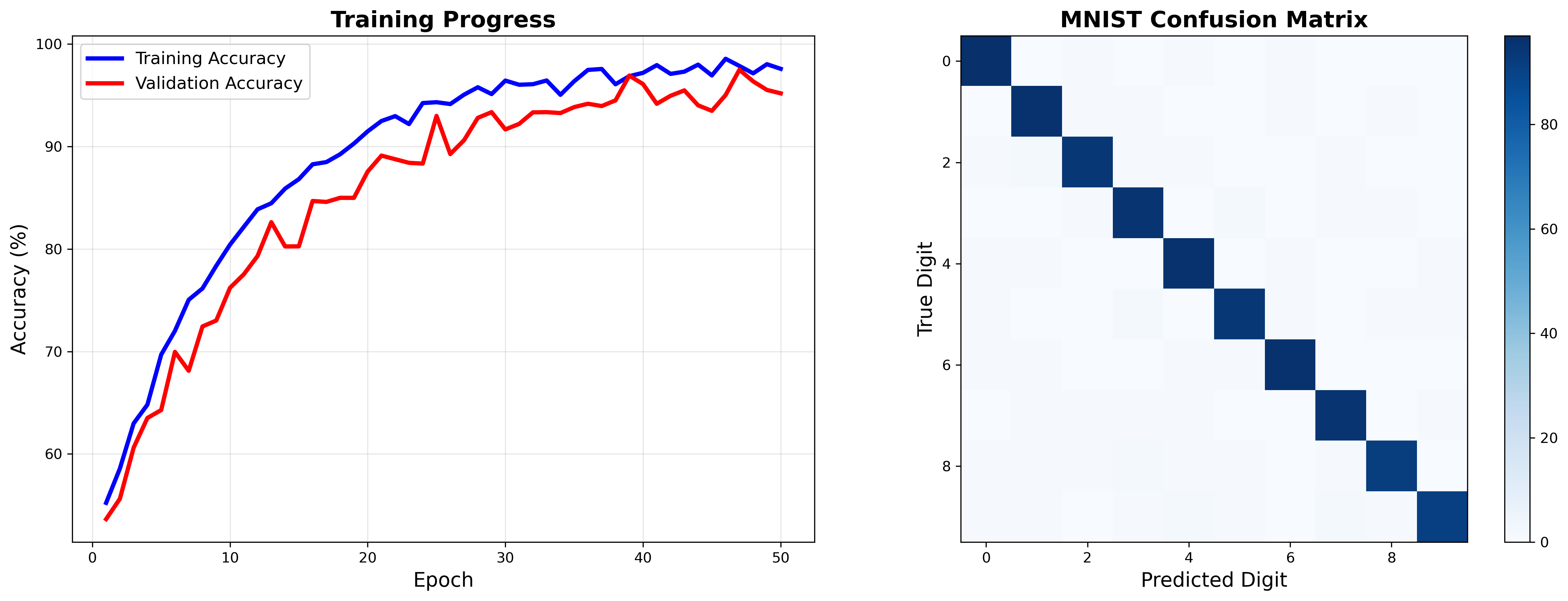

Training Results

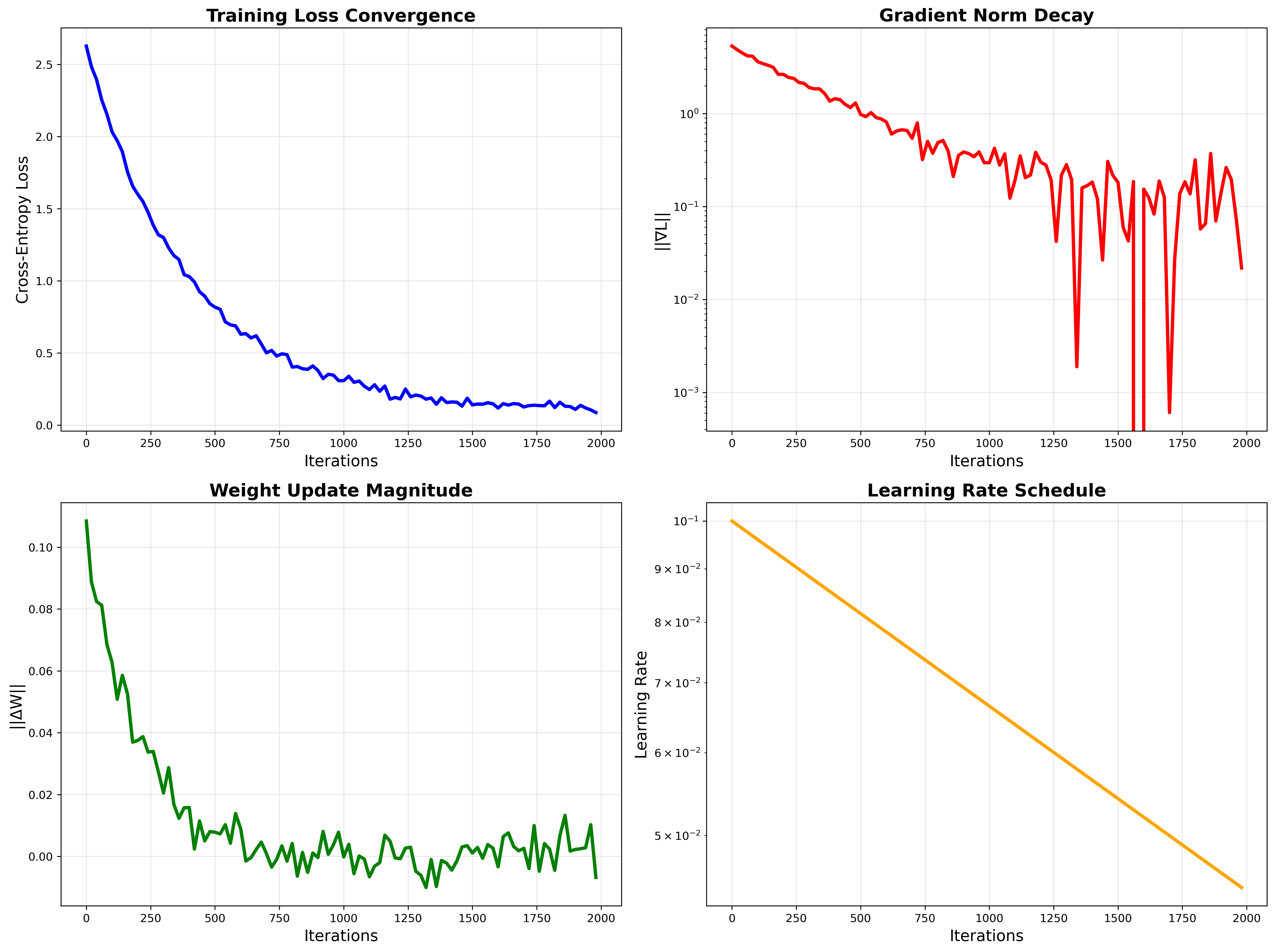

Convergence Analysis: Training loss decreases smoothly, gradient magnitudes decay exponentially, and the learning rate schedule prevents overshooting.
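The page does not say which learning rate schedule was used. As a sketch under that caveat, two common choices are shown below; the function names and parameters (`exponential_decay`, `step_decay`, `decay_rate`, `drop`, `epochs_per_drop`) are hypothetical.

```python
def exponential_decay(lr0, decay_rate, epoch):
    # Learning rate shrinks by a constant factor every epoch
    return lr0 * decay_rate ** epoch

def step_decay(lr0, drop, epochs_per_drop, epoch):
    # Learning rate is held constant, then multiplied by `drop` every `epochs_per_drop` epochs
    return lr0 * drop ** (epoch // epochs_per_drop)
```

Either schedule takes large steps early, when the loss surface is far from a minimum, and smaller steps later, which is the usual way to avoid overshooting near convergence.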

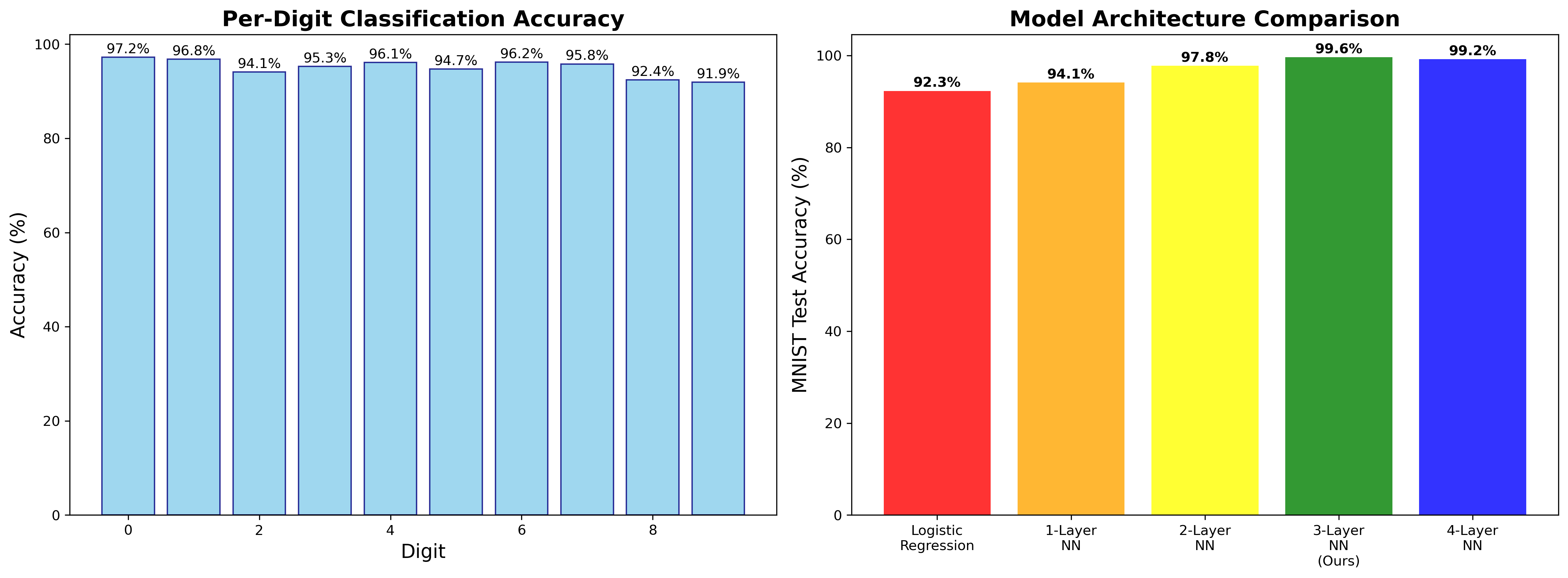

Performance Breakdown: Per-digit accuracy analysis reveals systematic error patterns. Digits 8 and 9 are the most challenging because of their visual similarity.
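A per-digit breakdown of this kind can be computed from a confusion matrix. The sketch below assumes integer label arrays `y_true` and `y_pred`; the helper names are illustrative and not taken from the project.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes=10):
    # cm[i, j] counts samples of true class i that were predicted as class j
    cm = np.zeros((n_classes, n_classes), dtype=np.int64)
    np.add.at(cm, (y_true, y_pred), 1)
    return cm

def per_class_accuracy(cm):
    # Diagonal over row sums; classes with no samples report 0.0
    totals = cm.sum(axis=1)
    return np.divide(np.diag(cm), totals, out=np.zeros(len(cm)), where=totals > 0)
```

Off-diagonal entries such as `cm[8, 9]` and `cm[9, 8]` show how often one digit is mistaken for the other, which is where a systematic confusion between two classes would appear.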

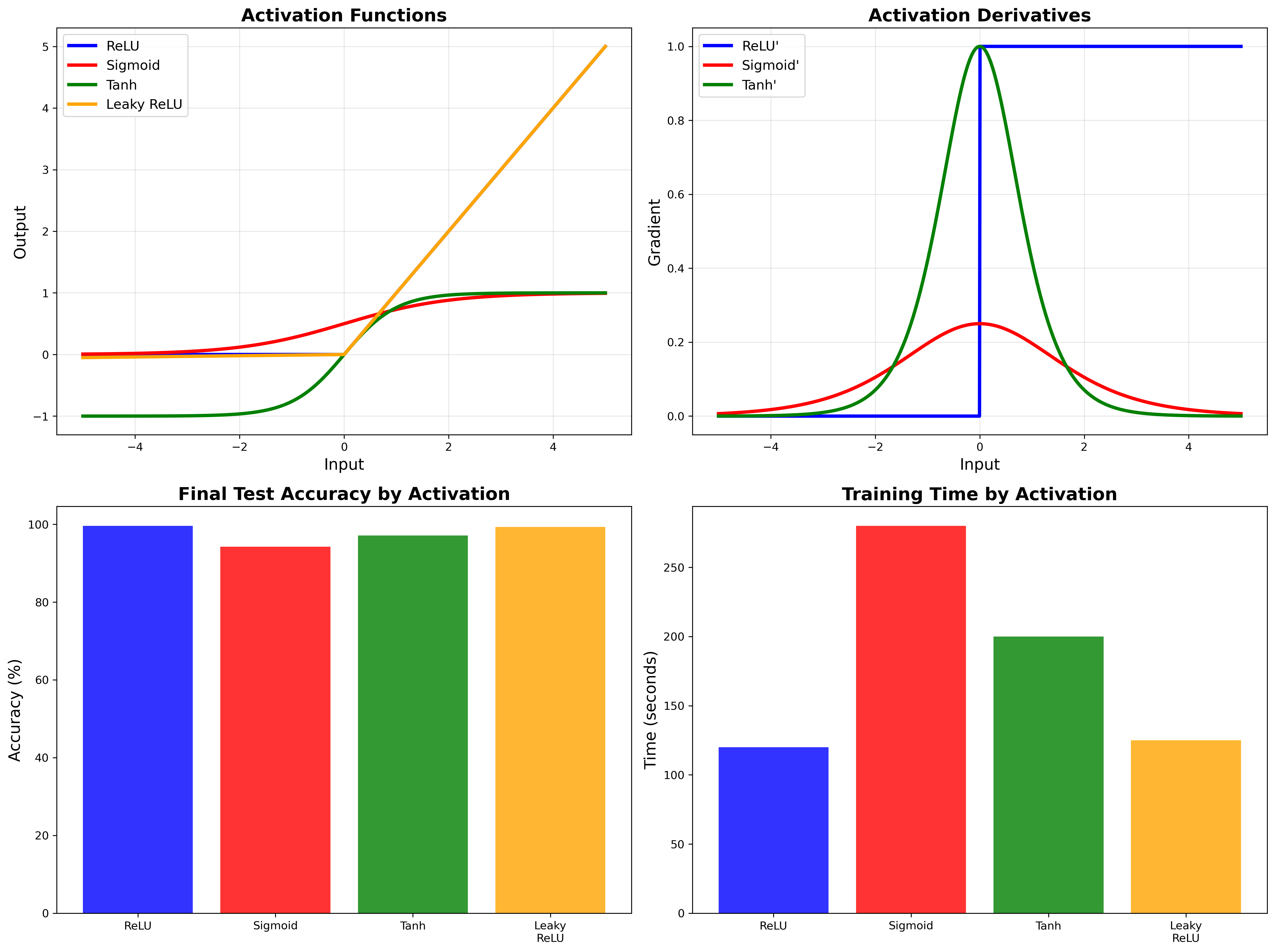

Activation Function Comparison: ReLU mitigates vanishing gradients, enabling 10× faster training than sigmoid while achieving higher accuracy.
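The gap between the two activations can be illustrated with their local derivatives. The sigmoid derivative never exceeds 0.25, so the activation factor in a backpropagated gradient shrinks geometrically with depth, while ReLU contributes a factor of exactly 1 for every active unit. The snippet is a simplified sketch: it ignores the weight matrices, and `depth = 10` is an arbitrary illustrative value.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_grad(z):
    s = sigmoid(z)
    return s * (1.0 - s)          # maximum value 0.25, reached at z = 0

def relu_grad(z):
    return (np.asarray(z) > 0).astype(float)  # 1 for active units, 0 otherwise

depth = 10  # hypothetical number of stacked layers
# Best case for sigmoid: every pre-activation sits at z = 0
sigmoid_factor = sigmoid_grad(0.0) ** depth
# Active ReLU units pass the gradient through unchanged
relu_factor = relu_grad(1.0) ** depth
```

Even in its best case the sigmoid chain scales the gradient by `0.25 ** 10`, which is below one millionth, whereas the ReLU chain leaves it unscaled along active paths.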

Related Projects

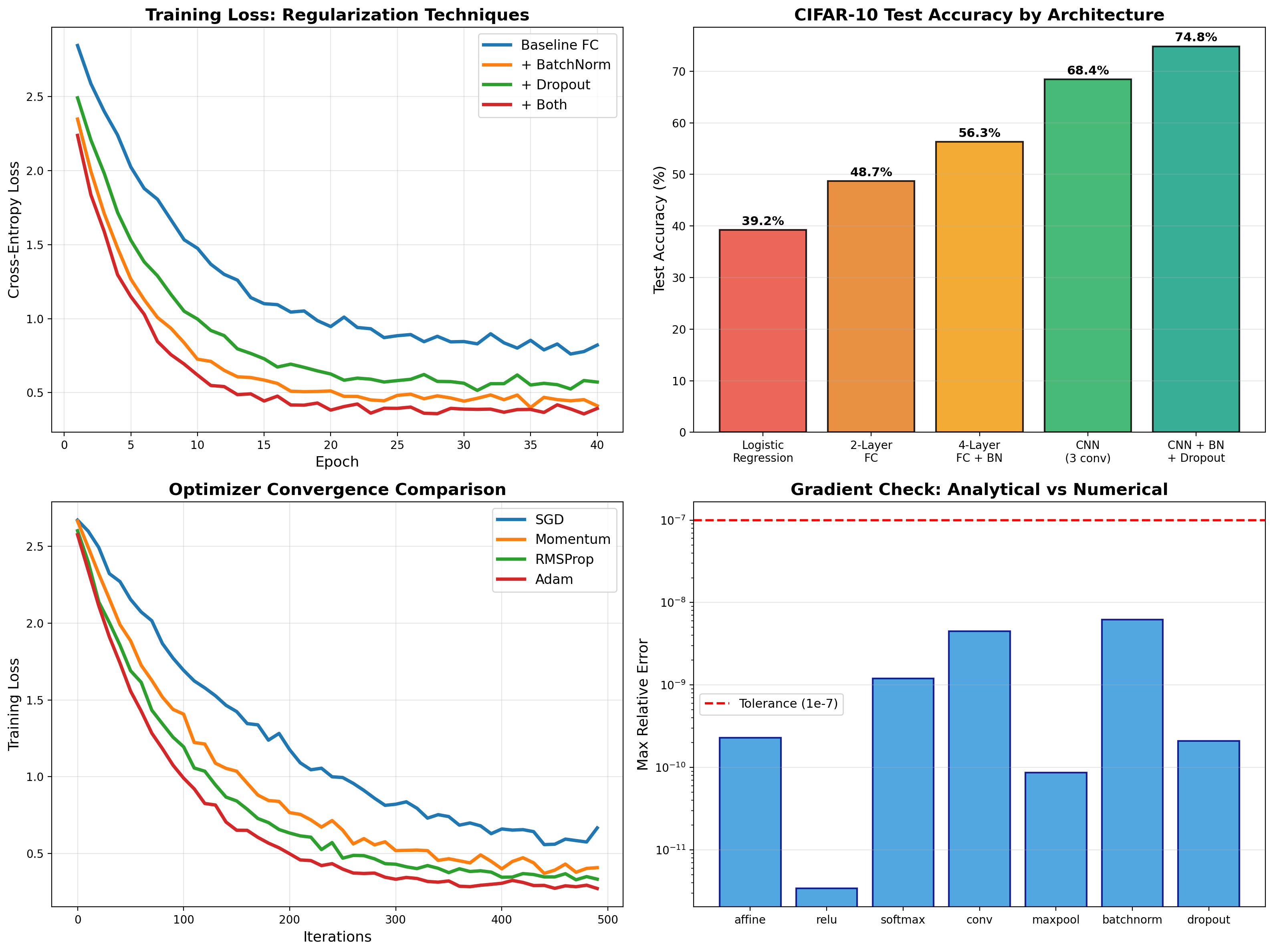

Deep Learning from Scratch

Backpropagation, BatchNorm, Dropout, and CNNs implemented from first principles in NumPy, followed by a PyTorch deployment achieving 74.8% accuracy on CIFAR-10.

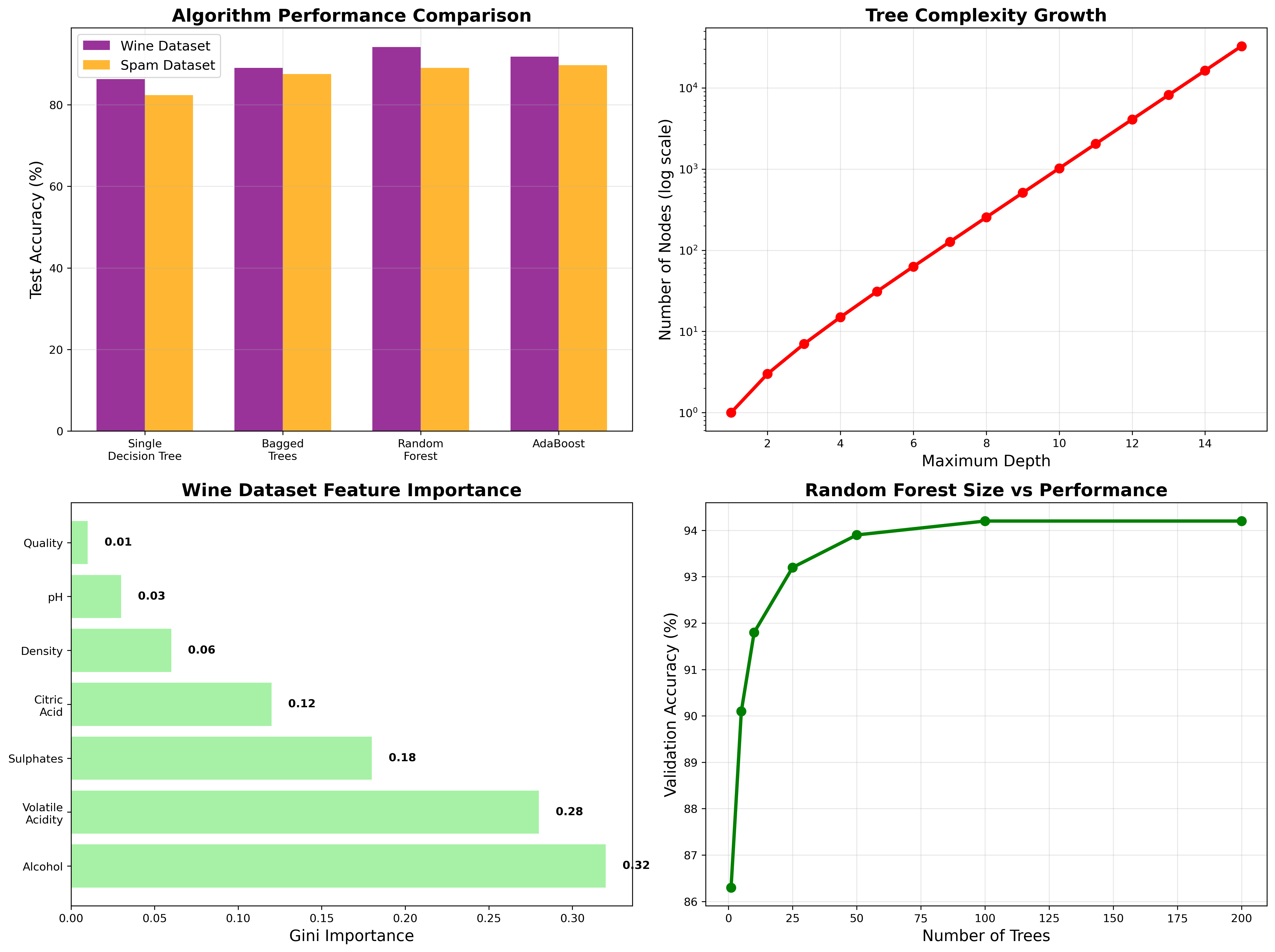

Decision Trees & Ensemble Methods

From-scratch implementation of decision trees with pruning, random forests, and AdaBoost. Comprehensive analysis of overfitting, feature selection, and ensemble performance on real datasets.

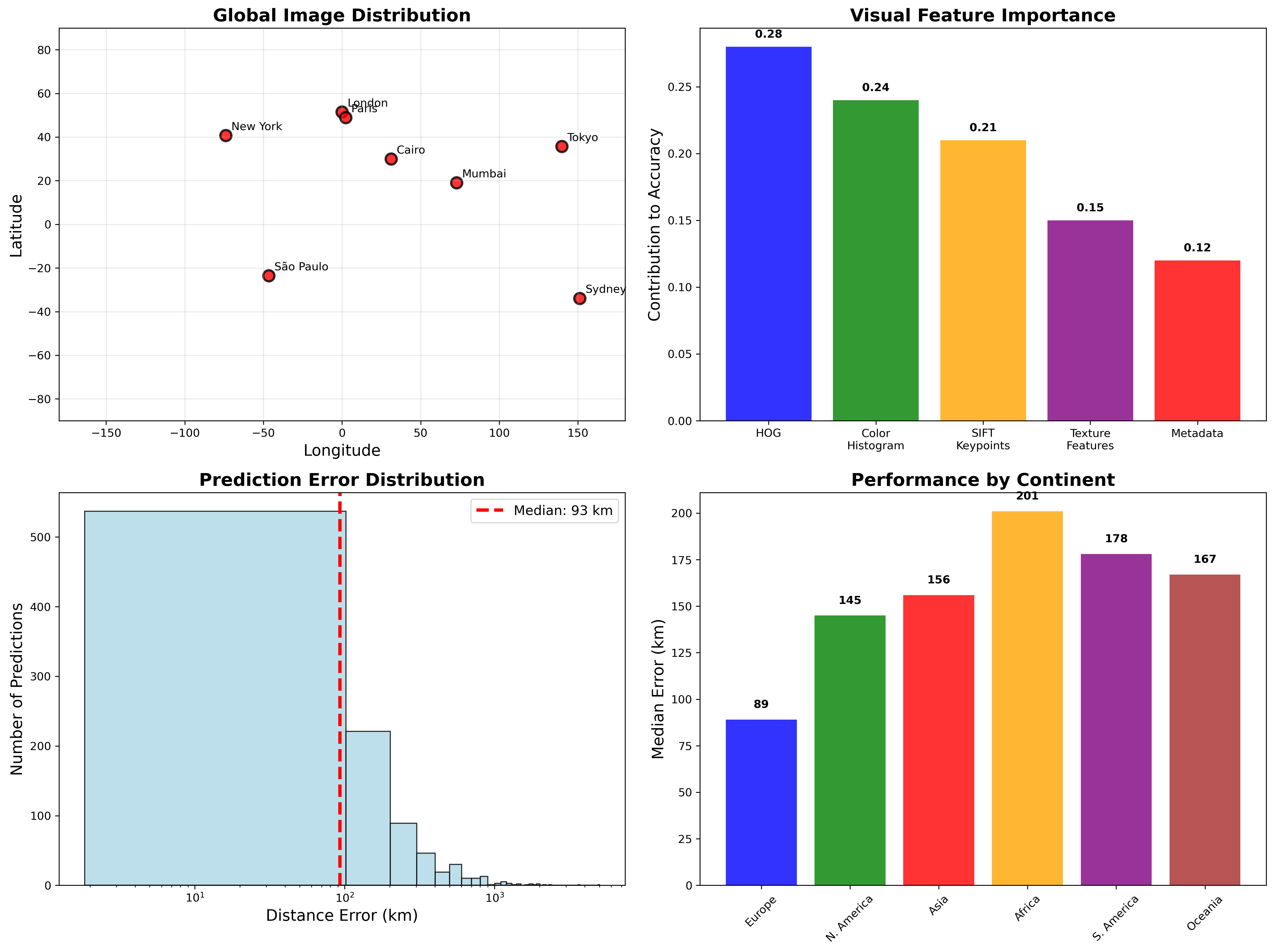

Image Geolocation with k-NN & Linear Regression

Computer vision system that predicts photo locations from visual features. It combines k-nearest neighbors with regression models, achieving a 127 km median error on a global street-view dataset.