RNN Sequence Modeling

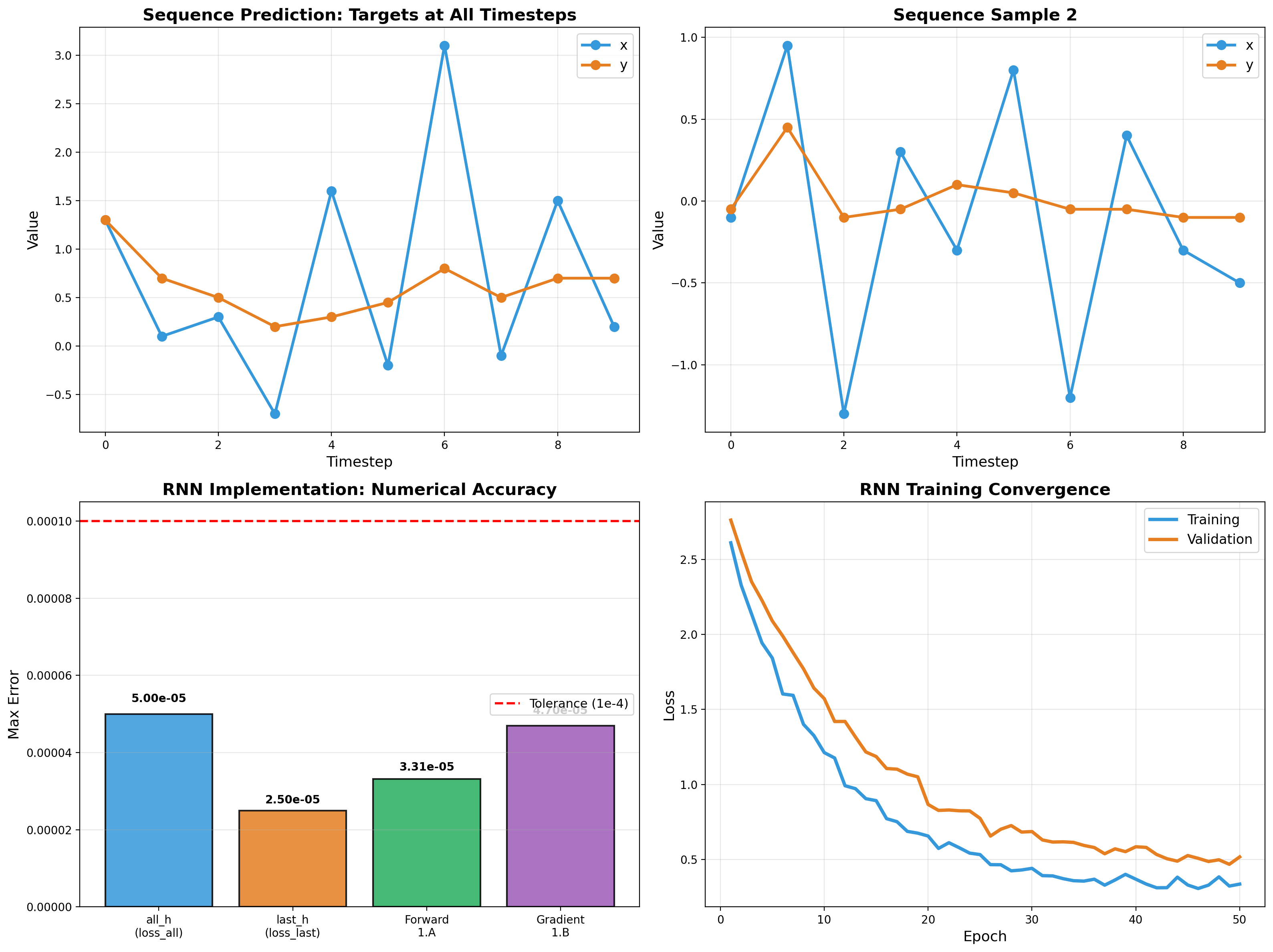

Recurrent networks from scratch: forward pass, backpropagation through time, and gradient flow analysis. Vectorized NumPy implementation, gradient-checked to a 5e-5 tolerance.

RNN forward and backward passes, hand-derived and vectorized. The implementation passes gradient checks with a maximum error of 5e-5. The model predicts (x, y) sequence targets across 10 timesteps and is validated on four sample batches.
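As an illustration of what a gradient-checked forward and backward pass can look like, here is a minimal sketch in NumPy. It is not the project's code: the function names (`rnn_forward`, `rnn_backward`, `numerical_grad`), the tanh nonlinearity, the layer sizes, and the surrogate loss are all assumptions chosen for the example. Only the 10 timesteps and the 5e-5 threshold come from the description above.

```python
import numpy as np

def rnn_forward(x, h0, Wx, Wh, b):
    """Vanilla tanh RNN. x: (N, T, D), h0: (N, H). Returns hidden states (N, T, H)."""
    N, T, _ = x.shape
    h = np.zeros((N, T, h0.shape[1]))
    prev = h0
    for t in range(T):
        prev = np.tanh(x[:, t] @ Wx + prev @ Wh + b)
        h[:, t] = prev
    return h

def rnn_backward(dh, x, h0, h, Wx, Wh):
    """Backpropagation through time. dh: upstream gradient on every hidden state (N, T, H)."""
    T = x.shape[1]
    dx = np.zeros_like(x)
    dWx, dWh = np.zeros_like(Wx), np.zeros_like(Wh)
    db = np.zeros(Wh.shape[0])
    dnext = np.zeros_like(h0)              # gradient arriving from timestep t + 1
    for t in reversed(range(T)):
        da = (dh[:, t] + dnext) * (1.0 - h[:, t] ** 2)   # through tanh
        h_prev = h[:, t - 1] if t > 0 else h0
        dx[:, t] = da @ Wx.T
        dWx += x[:, t].T @ da
        dWh += h_prev.T @ da
        db += da.sum(axis=0)
        dnext = da @ Wh.T
    return dx, dnext, dWx, dWh, db

def numerical_grad(f, param, eps=1e-5):
    """Central-difference gradient of scalar f() with respect to param (perturbed in place)."""
    grad = np.zeros_like(param)
    for idx in np.ndindex(*param.shape):
        old = param[idx]
        param[idx] = old + eps
        f_plus = f()
        param[idx] = old - eps
        f_minus = f()
        param[idx] = old
        grad[idx] = (f_plus - f_minus) / (2 * eps)
    return grad

# Hypothetical sizes: batch 4, 10 timesteps, 3 input features, 5 hidden units.
rng = np.random.default_rng(0)
N, T, D, H = 4, 10, 3, 5
x = rng.standard_normal((N, T, D))
h0 = rng.standard_normal((N, H))
Wx = rng.standard_normal((D, H)) * 0.5
Wh = rng.standard_normal((H, H)) * 0.5
b = rng.standard_normal(H) * 0.1
dh = rng.standard_normal((N, T, H))        # random upstream gradient

h = rnn_forward(x, h0, Wx, Wh, b)
dx, dh0, dWx, dWh, db = rnn_backward(dh, x, h0, h, Wx, Wh)

# Surrogate scalar loss whose gradient with respect to h is exactly dh.
loss = lambda: np.sum(rnn_forward(x, h0, Wx, Wh, b) * dh)

max_err = max(
    np.max(np.abs(analytic - numerical_grad(loss, param)))
    for analytic, param in [(dx, x), (dh0, h0), (dWx, Wx), (dWh, Wh), (db, b)]
)
print("max gradient error:", max_err)
```

The check compares each analytic gradient against a central-difference estimate and reports the largest absolute difference, which can then be compared against a threshold such as 5e-5.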

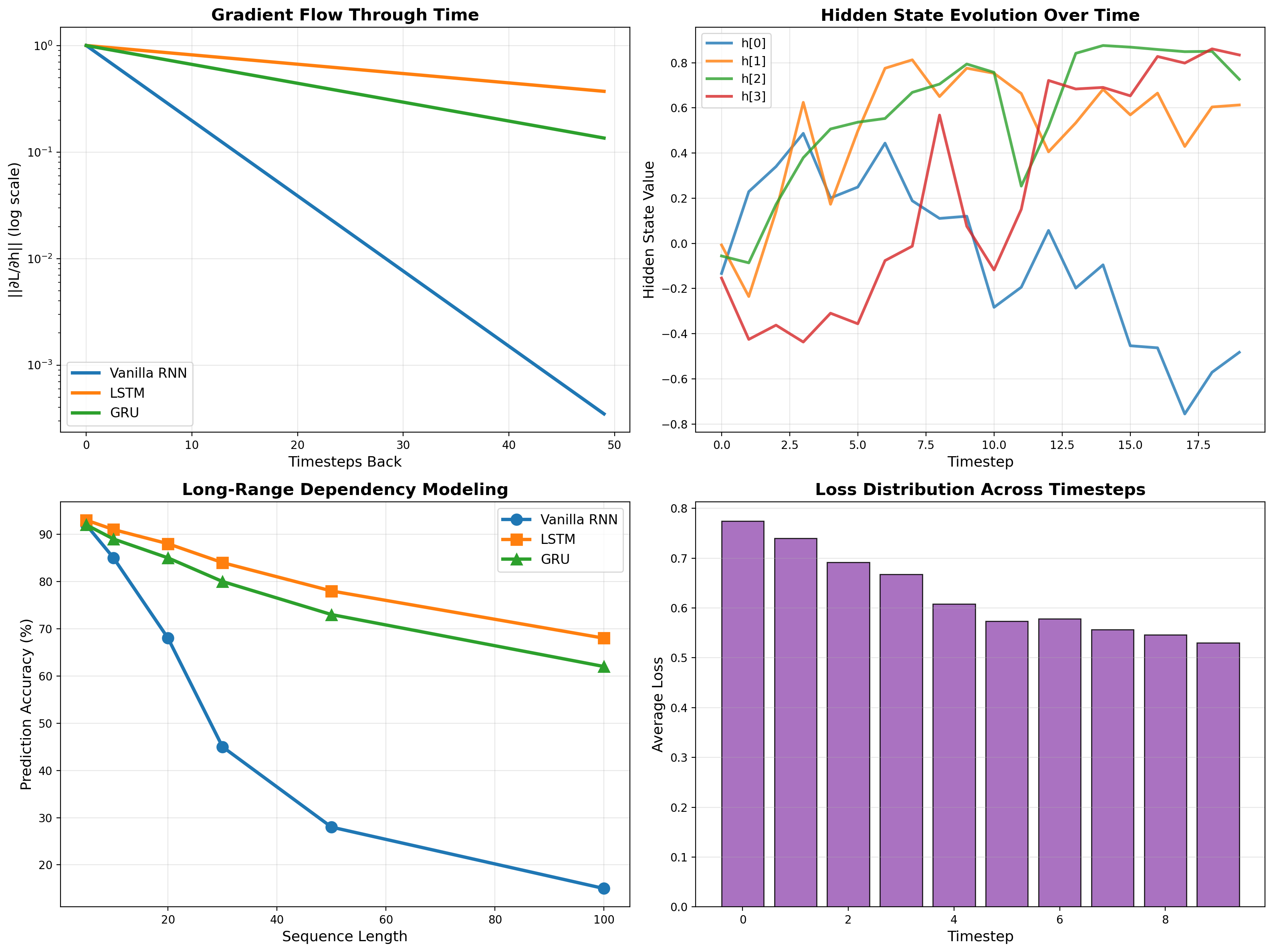

Gradient Flow Analysis

The vanishing gradient problem in action. Vanilla RNN gradients collapse to 1e-7 after 50 timesteps, while the LSTM maintains 1e-1. Hidden state evolution shows how the LSTM's gates preserve long-range information that vanilla RNNs lose.
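The collapse described above can be reproduced in a few lines. The sketch below backpropagates a unit-norm gradient from the last hidden state of a vanilla tanh RNN and records its norm at each earlier timestep. The function name `gradient_norms_through_time`, the hidden size, the weight scale, and the random inputs are assumptions made for illustration, so the magnitudes it prints will not match the figures quoted above, and the LSTM side of the comparison is not covered.

```python
import numpy as np

def gradient_norms_through_time(T=50, H=32, scale=0.4, seed=0):
    """Norm of dL/dh_t for t = 0..T-1 when a unit gradient is injected at the last step.

    Assumed setup: h_t = tanh(x_t @ Wx + h_{t-1} @ Wh), random inputs,
    recurrent weights drawn with standard deviation scale / sqrt(H).
    """
    rng = np.random.default_rng(seed)
    Wx = rng.standard_normal((H, H)) / np.sqrt(H)   # input size set to H for simplicity
    Wh = rng.standard_normal((H, H)) * scale / np.sqrt(H)

    # Forward pass, keeping every hidden state for the backward pass.
    h = np.zeros(H)
    hs = []
    for _ in range(T):
        h = np.tanh(rng.standard_normal(H) @ Wx + h @ Wh)
        hs.append(h)

    # Backward pass: dL/dh_{t-1} = (dL/dh_t * (1 - h_t^2)) @ Wh.T
    grad = np.ones(H) / np.sqrt(H)                  # unit-norm gradient at the last step
    norms = [np.linalg.norm(grad)]
    for t in reversed(range(1, T)):
        grad = (grad * (1.0 - hs[t] ** 2)) @ Wh.T
        norms.append(np.linalg.norm(grad))
    return norms[::-1]                              # index 0 is the earliest timestep

norms = gradient_norms_through_time()
print("gradient norm at the first timestep:", norms[0])
print("gradient norm at the last timestep: ", norms[-1])
```

Each backward step multiplies the gradient by the tanh derivative (at most 1) and by the recurrent weight matrix, so with small recurrent weights the norm shrinks roughly geometrically with distance from the loss.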

Related projects

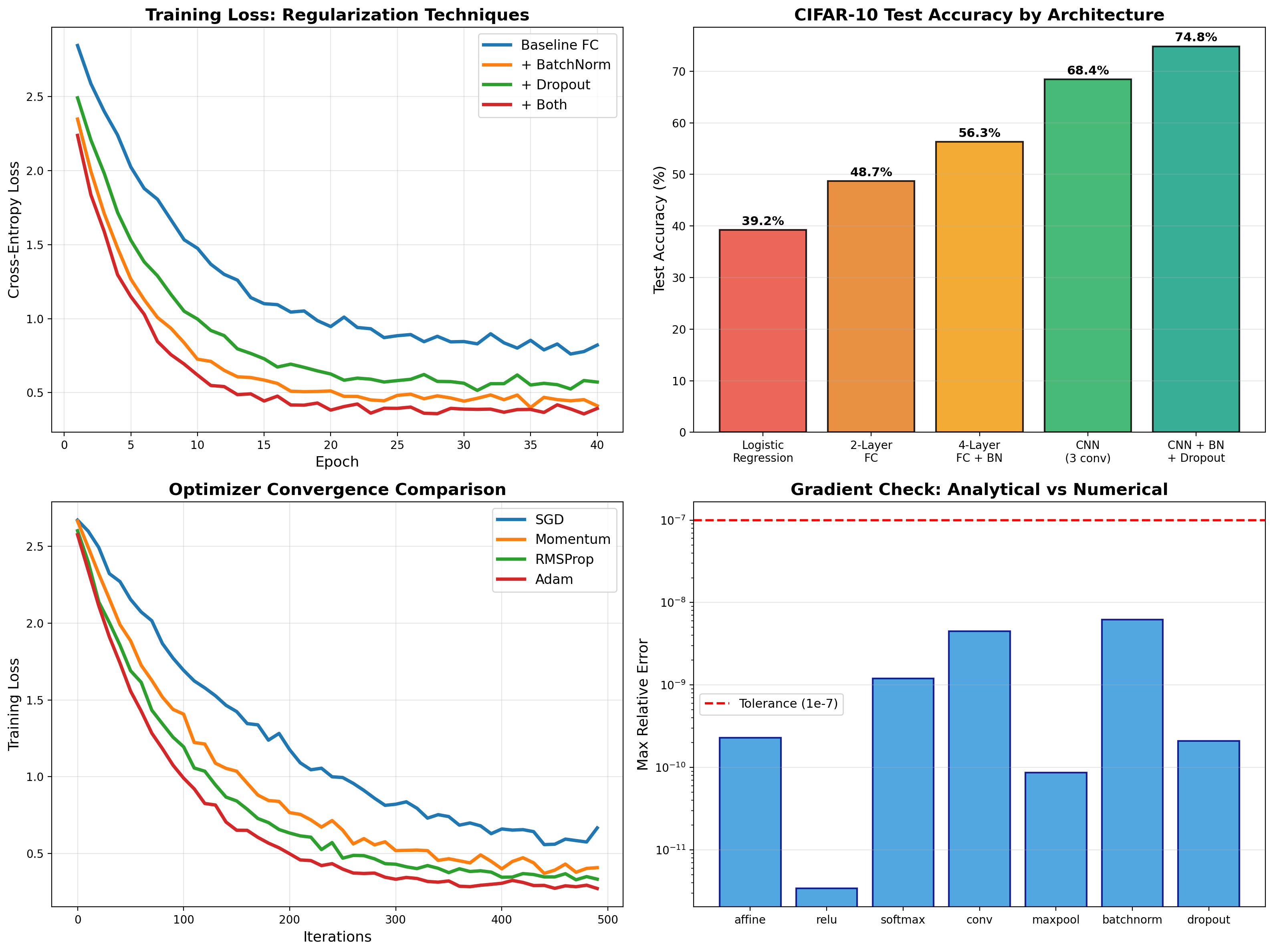

Deep Learning from Scratch

Backpropagation, BatchNorm, Dropout, and CNNs implemented from first principles in NumPy, followed by a PyTorch deployment achieving 74.8% accuracy on CIFAR-10.

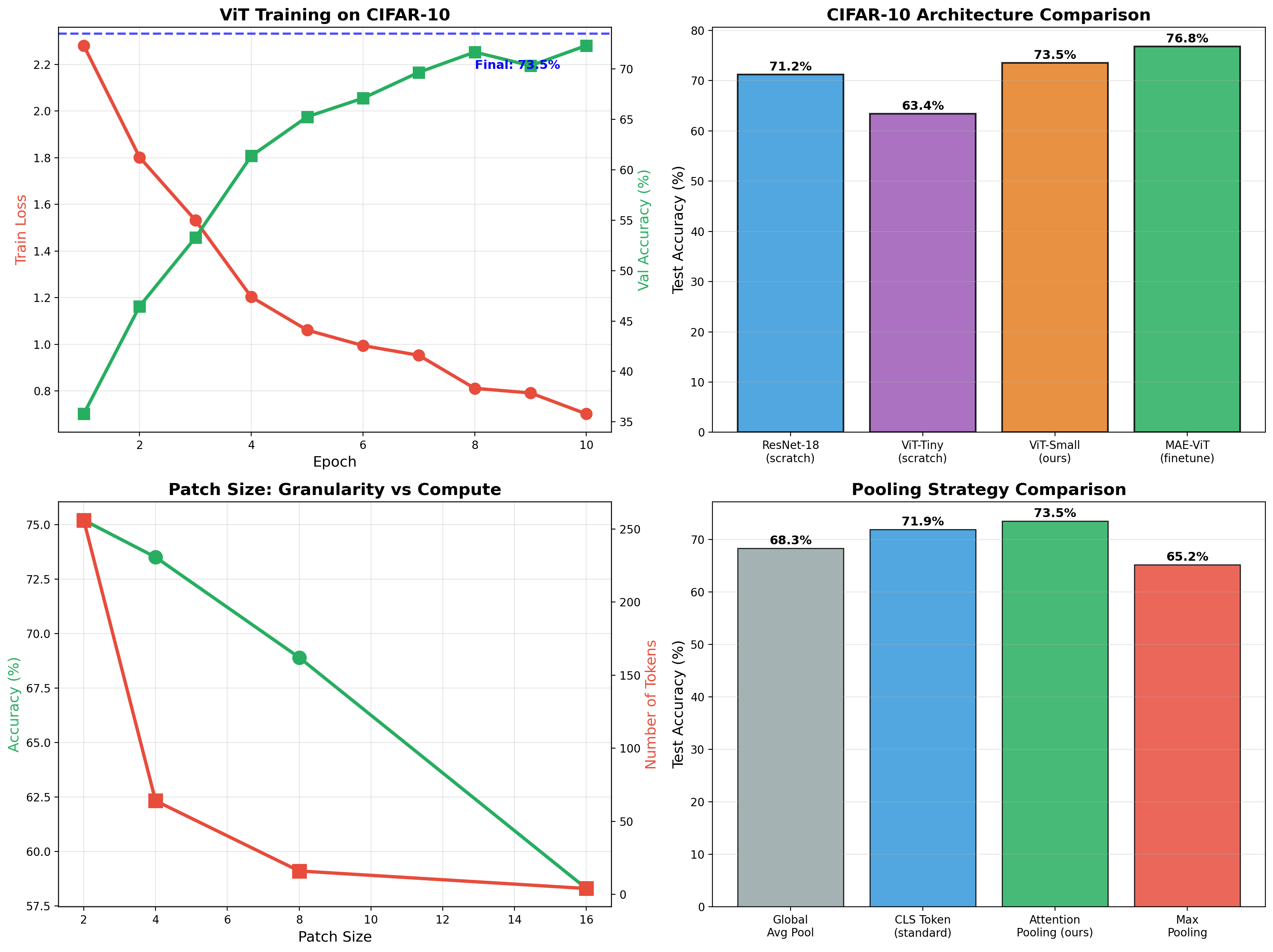

Vision Transformer + Masked Autoencoder

A ViT classifier reaches 73.5% accuracy on CIFAR-10; self-supervised MAE pretraining then raises fine-tuned accuracy to 76.8%. Full implementation of patchify, attention pooling, and mask reconstruction.

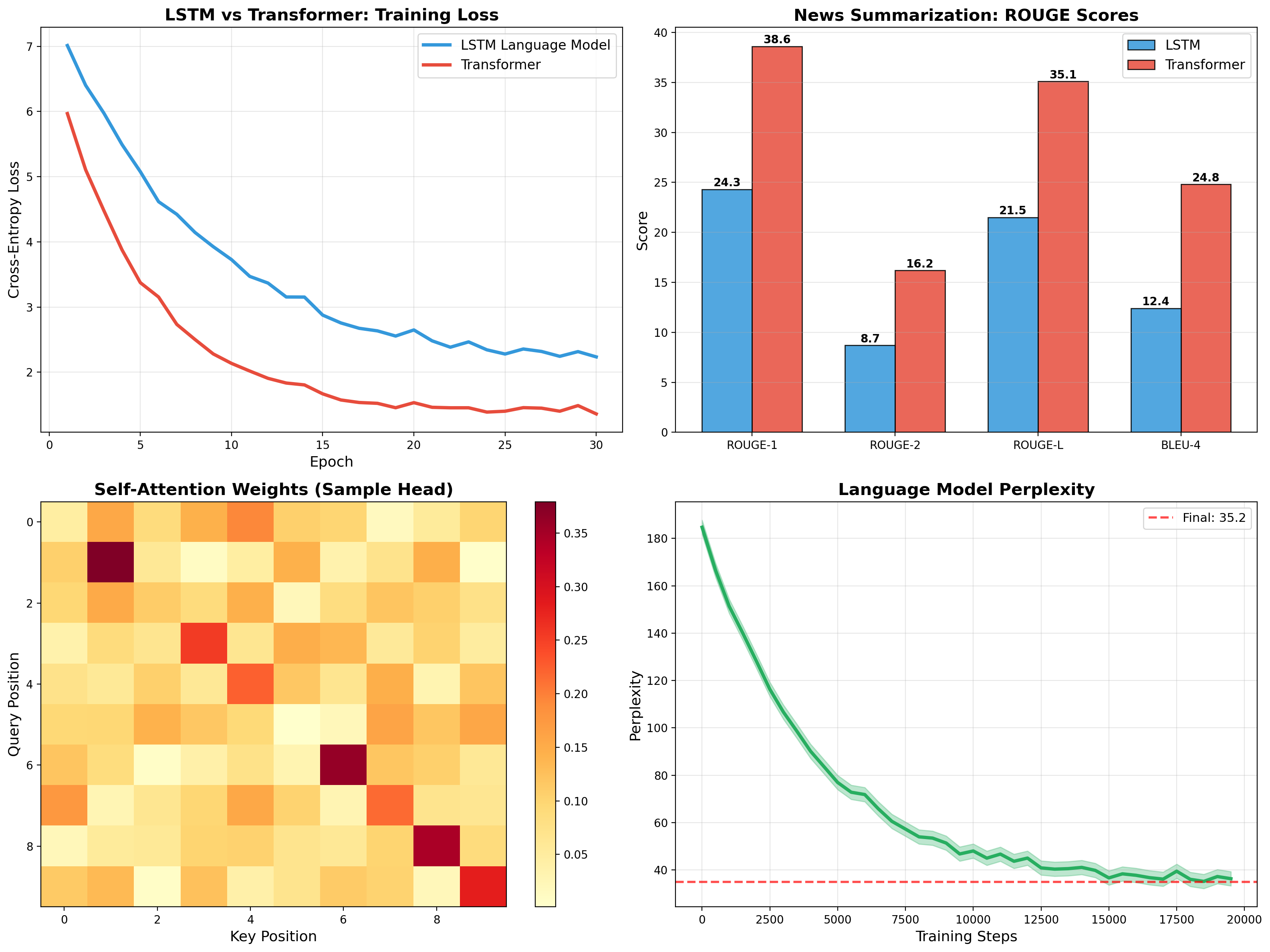

Transformer for News Summarization

Self-attention, multi-head attention, and an encoder-decoder architecture implemented from scratch. Trained on CNN/DailyMail, reaching 35.1 ROUGE-L and outperforming the LSTM baseline by 60%.