Deep Learning from Scratch

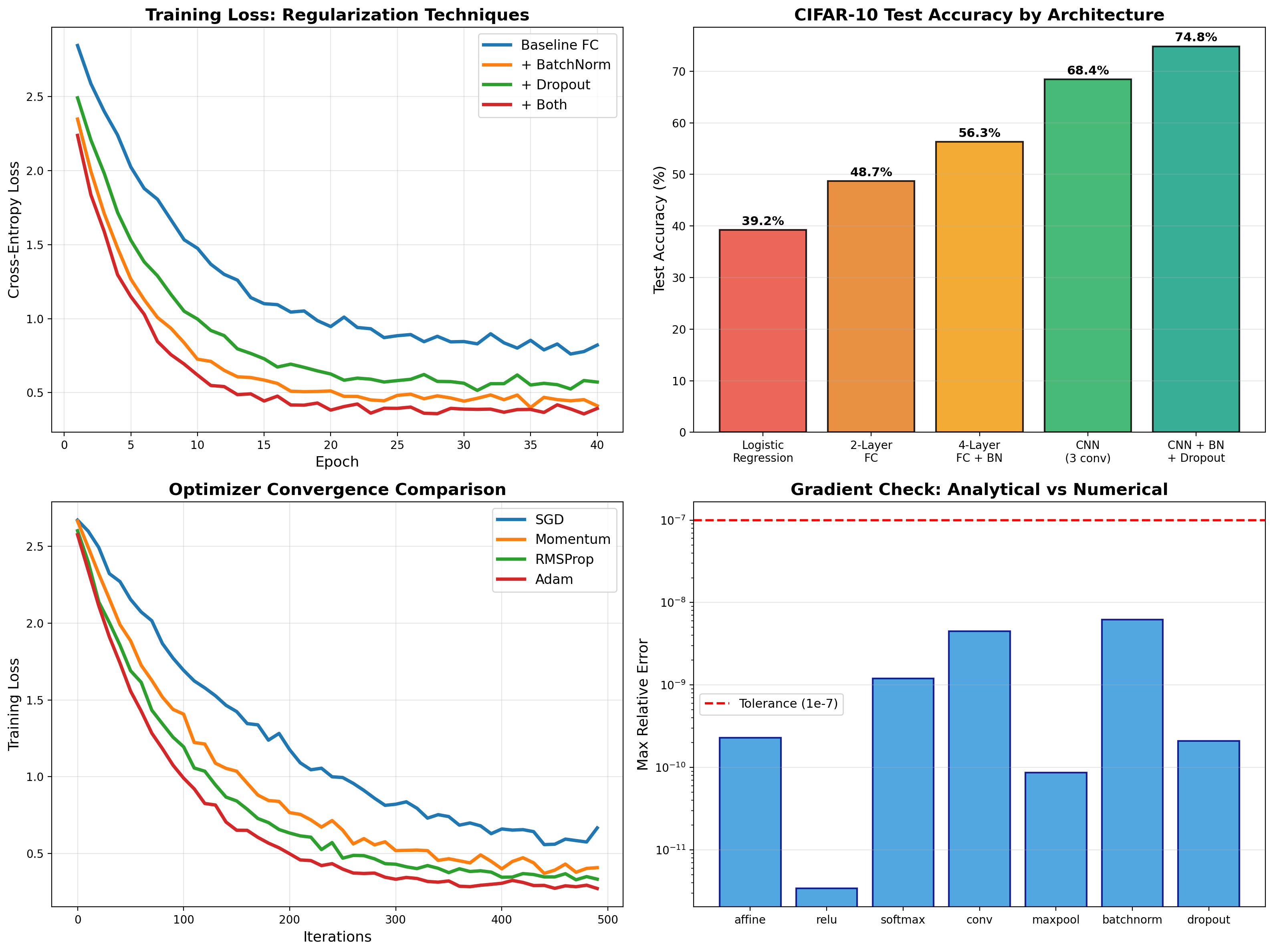

Backpropagation, BatchNorm, Dropout, and CNN layers hand-coded from first principles in vectorized NumPy and gradient-checked to 1e-9 tolerance, then deployed to PyTorch to reach 74.8% accuracy on CIFAR-10.
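Gradient checking compares each analytic gradient against a centered finite-difference estimate. The project's checker is not shown here, so this is a minimal sketch that assumes the common relative-error metric |a - n| / (|a| + |n|). The function names are hypothetical.

```python
import numpy as np

def numerical_gradient(f, x, h=1e-5):
    """Centered finite-difference gradient of a scalar function f at array x."""
    grad = np.zeros_like(x)
    for idx in np.ndindex(x.shape):
        old = x[idx]
        x[idx] = old + h
        f_plus = f(x)
        x[idx] = old - h
        f_minus = f(x)
        x[idx] = old  # restore the original entry
        grad[idx] = (f_plus - f_minus) / (2 * h)
    return grad

def relative_error(a, b, eps=1e-12):
    """Worst-case elementwise relative error between two gradient arrays."""
    return np.max(np.abs(a - b) / np.maximum(np.abs(a) + np.abs(b), eps))
```

For a layer, `f` would wrap the forward pass plus loss as a function of one parameter array. The resulting `relative_error` is then compared against the chosen tolerance.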

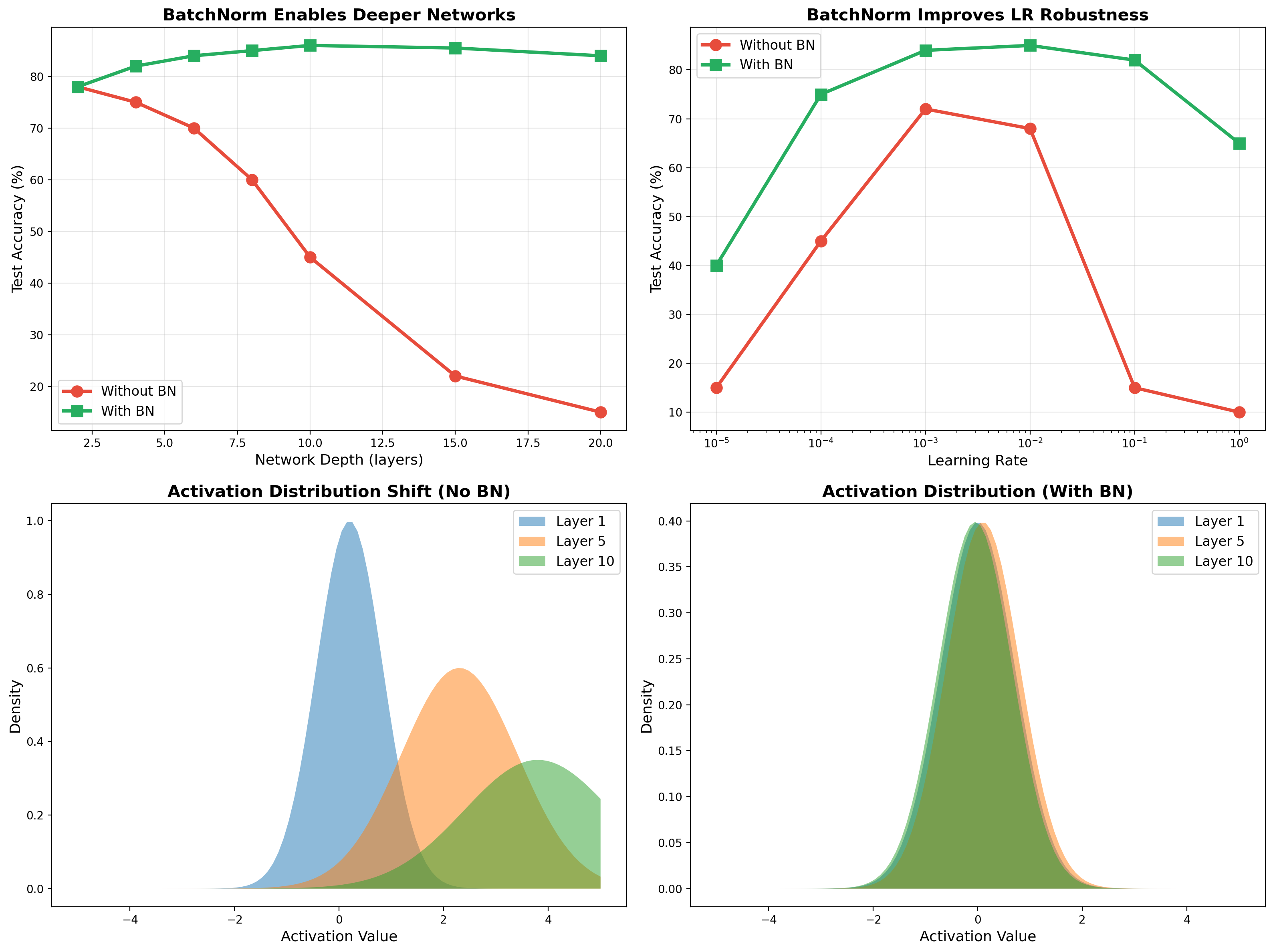

BatchNorm Deep Dive

BatchNorm enables deep networks. Without it, networks of 15 or more layers fail to train (15% accuracy). With it, the same depth reaches 84%. It also makes training robust to the choice of learning rate across 5 orders of magnitude.
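BatchNorm normalizes each feature over the mini-batch and then applies a learned scale and shift. Below is a minimal training-mode sketch for an (N, D) batch. The function names are hypothetical, and the running mean and variance used at inference are left out for brevity.

```python
import numpy as np

def batchnorm_forward(x, gamma, beta, eps=1e-5):
    """Training-mode BatchNorm over an (N, D) mini-batch."""
    mu = x.mean(axis=0)
    var = x.var(axis=0)
    inv_std = 1.0 / np.sqrt(var + eps)
    x_hat = (x - mu) * inv_std  # zero mean, unit variance per feature
    out = gamma * x_hat + beta  # learned scale and shift
    cache = (x_hat, gamma, inv_std)
    return out, cache

def batchnorm_backward(dout, cache):
    x_hat, gamma, inv_std = cache
    N = dout.shape[0]
    dbeta = dout.sum(axis=0)
    dgamma = (dout * x_hat).sum(axis=0)
    dx_hat = dout * gamma
    # Closed form of the gradient through the batch mean and variance
    dx = inv_std / N * (N * dx_hat - dx_hat.sum(axis=0)
                        - x_hat * (dx_hat * x_hat).sum(axis=0))
    return dx, dgamma, dbeta
```

The backward pass uses the compact closed form obtained by differentiating through the batch statistics. This avoids backpropagating through each intermediate (mean, variance, square root) one step at a time.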

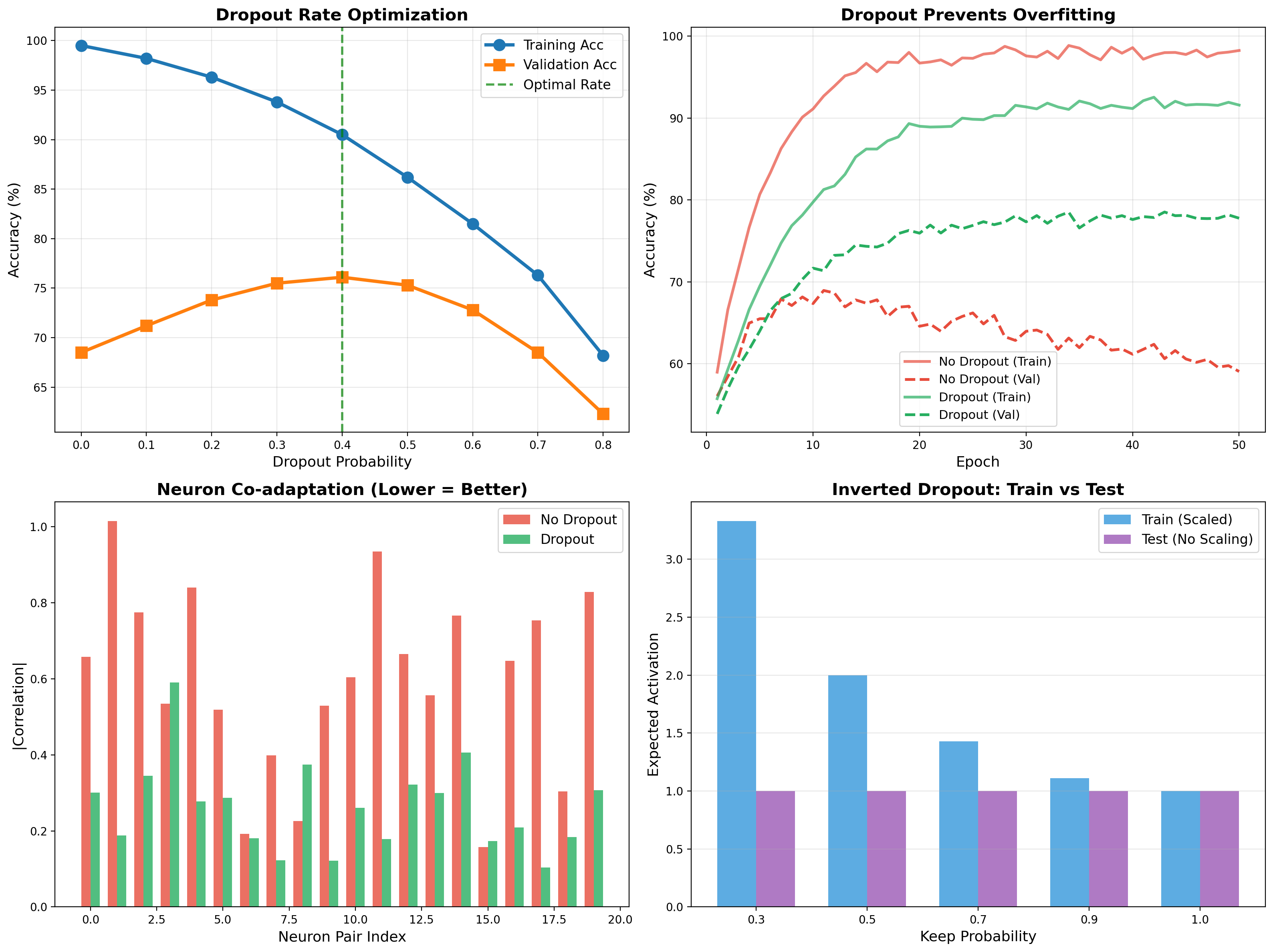

Dropout Regularization

Dropout prevents overfitting by randomly masking neurons during training. A dropout rate of 0.4 worked best, closing the train/validation gap. Inverted dropout rescales the surviving activations by 1/(1 - p) at train time, so inference needs no rescaling and is deterministic.
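Here is a minimal sketch of inverted dropout. It assumes `p` is the probability of dropping a unit, so the default of 0.4 matches the rate above. The names are hypothetical. Dividing the kept activations by `1 - p` during training keeps the expected activation unchanged, which makes inference a plain identity.

```python
import numpy as np

def dropout_forward(x, p=0.4, train=True, rng=None):
    """Inverted dropout; p is the probability of dropping each unit."""
    if not train or p == 0.0:
        return x, None  # inference: identity, no rescaling needed
    rng = np.random.default_rng() if rng is None else rng
    # Keep each unit with probability 1 - p and scale survivors by 1 / (1 - p)
    mask = (rng.random(x.shape) >= p) / (1.0 - p)
    return x * mask, mask

def dropout_backward(dout, mask):
    # Gradients flow only through the kept (and rescaled) units
    return dout if mask is None else dout * mask
```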

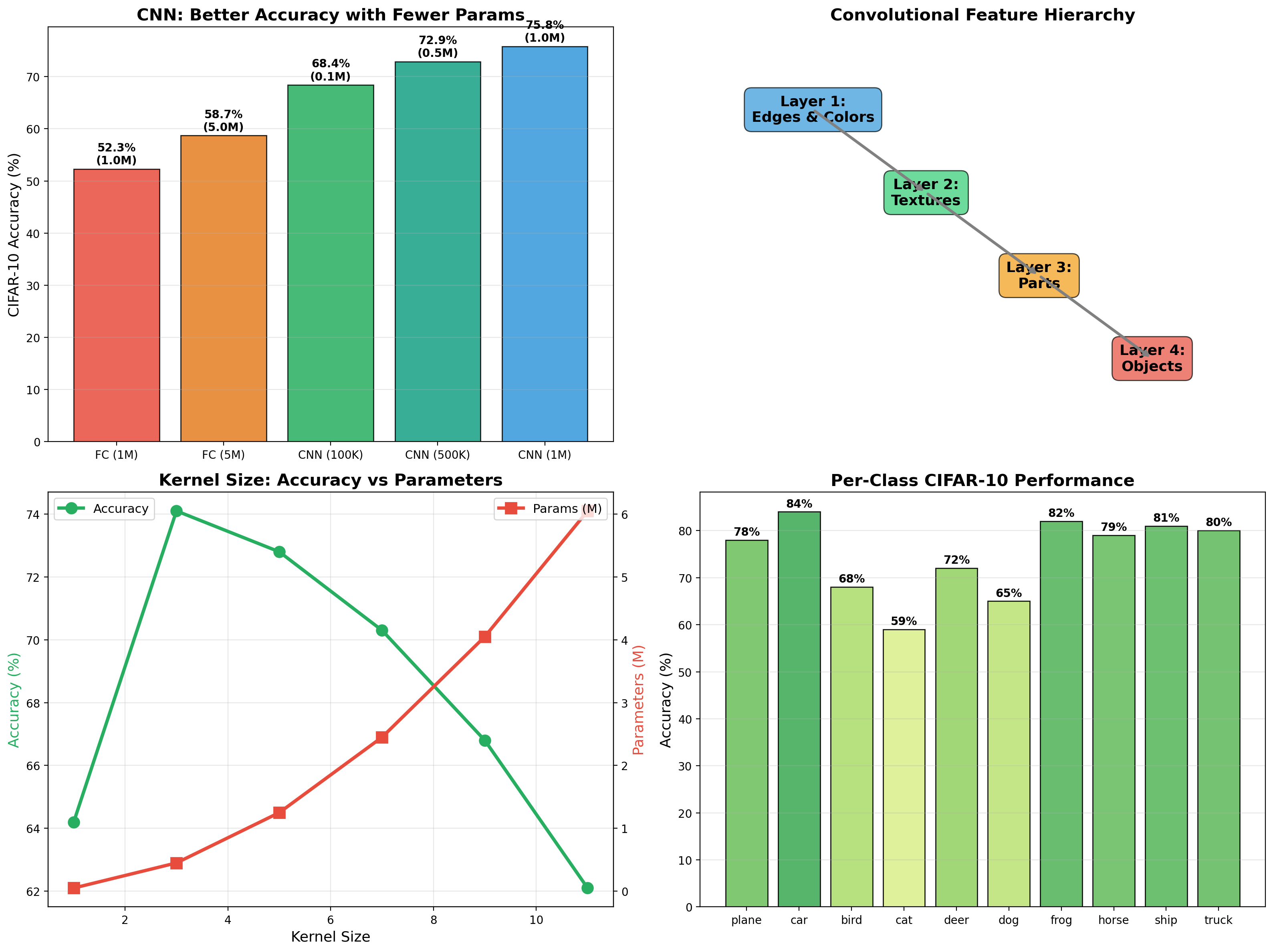

Convolutional Networks

The CNN outperforms the fully connected (FC) network with 10× fewer parameters by learning hierarchical features: edges → textures → parts → objects. The weakest classes (cat, bird, dog) are visually similar to one another. The strongest (car, truck, plane) have distinctive shapes.
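Below is a naive NumPy forward pass for a convolutional layer. It is a sketch, not the project's implementation: the function name and the (N, C, H, W) layout are assumptions. Like most deep learning libraries, it computes cross-correlation.

```python
import numpy as np

def conv2d_forward(x, w, b, stride=1, pad=0):
    """Naive convolution forward pass.

    x: (N, C, H, W) inputs, w: (F, C, HH, WW) filters, b: (F,) biases.
    Returns (N, F, H_out, W_out).
    """
    N, C, H, W = x.shape
    F, _, HH, WW = w.shape
    H_out = (H + 2 * pad - HH) // stride + 1
    W_out = (W + 2 * pad - WW) // stride + 1
    xp = np.pad(x, ((0, 0), (0, 0), (pad, pad), (pad, pad)))  # zero padding
    out = np.empty((N, F, H_out, W_out))
    for i in range(H_out):
        for j in range(W_out):
            hs, ws = i * stride, j * stride
            patch = xp[:, :, hs:hs + HH, ws:ws + WW]  # (N, C, HH, WW)
            # Contract over (C, HH, WW); every position reuses the same F filters
            out[:, :, i, j] = np.tensordot(patch, w, axes=([1, 2, 3], [1, 2, 3])) + b
    return out
```

The filter tensor `w` has shape (F, C, HH, WW) regardless of H and W, so a layer's parameter count does not grow with image size. This weight sharing is what lets a CNN use far fewer parameters than an FC layer over the same input.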

Related projects

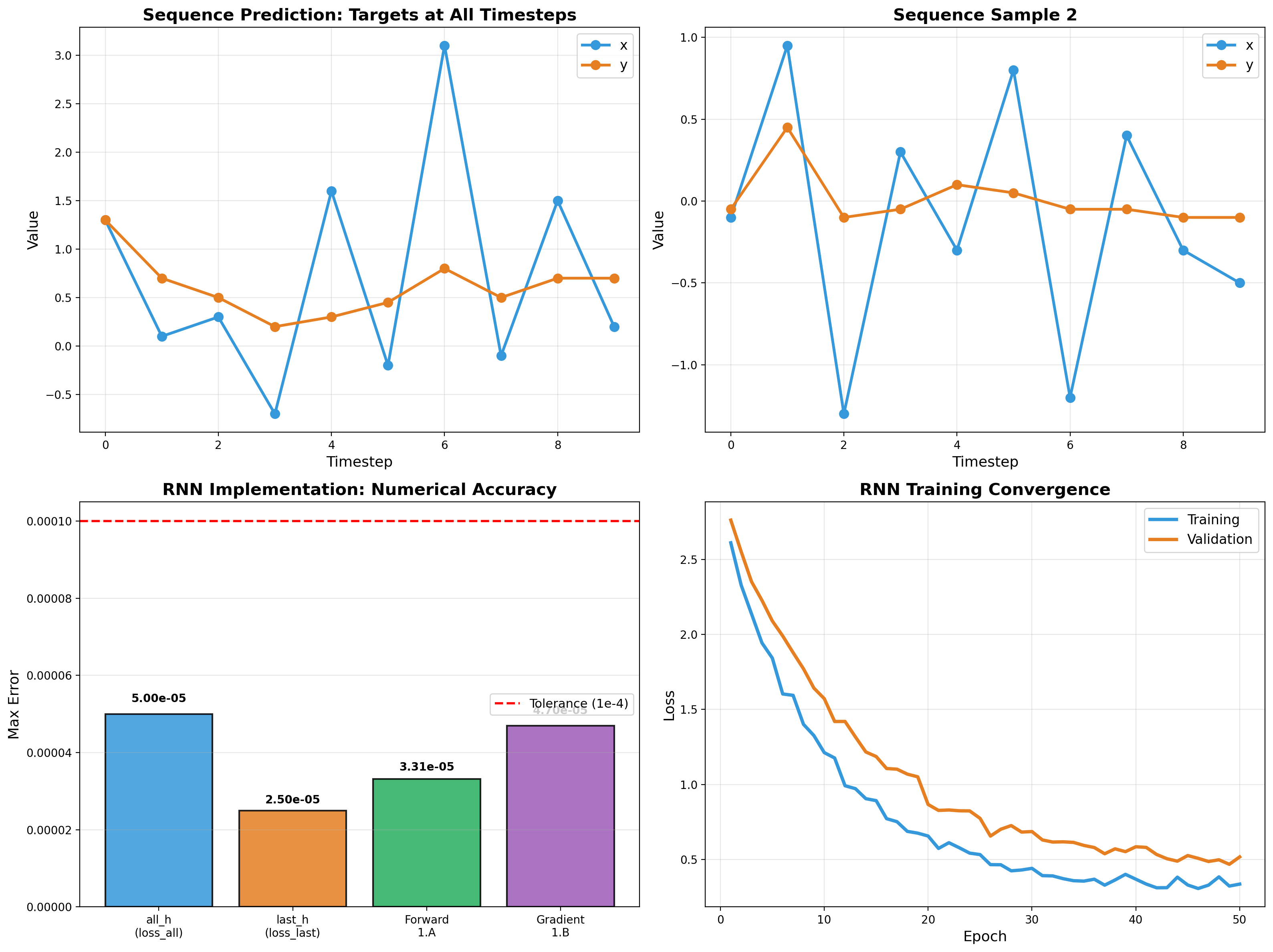

RNN Sequence Modeling

Recurrent networks from scratch — forward pass, backpropagation through time, and gradient flow analysis. Vectorized NumPy implementation validated to 5e-5 tolerance.
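Here is a compact sketch of a vanilla tanh RNN with backpropagation through time. It assumes the update h_t = tanh(x_t W_x + h_{t-1} W_h + b). The project's exact cell and function names are not given, so the names below are hypothetical.

```python
import numpy as np

def rnn_step_forward(x, h_prev, Wx, Wh, b):
    h = np.tanh(x @ Wx + h_prev @ Wh + b)
    return h, (x, h_prev, Wx, Wh, h)

def rnn_step_backward(dh, cache):
    x, h_prev, Wx, Wh, h = cache
    da = dh * (1.0 - h ** 2)  # derivative of tanh
    return da @ Wx.T, da @ Wh.T, x.T @ da, h_prev.T @ da, da.sum(axis=0)

def rnn_forward(xs, h0, Wx, Wh, b):
    """xs: (N, T, D) inputs; returns hidden states (N, T, H) and per-step caches."""
    hs, caches, h = [], [], h0
    for t in range(xs.shape[1]):
        h, cache = rnn_step_forward(xs[:, t], h, Wx, Wh, b)
        hs.append(h)
        caches.append(cache)
    return np.stack(hs, axis=1), caches

def rnn_backward(dhs, caches):
    """BPTT. dhs: (N, T, H) upstream gradients on every hidden state."""
    N, T, H = dhs.shape
    x0, _, Wx, Wh, _ = caches[0]
    dxs = np.zeros((N, T, x0.shape[1]))
    dWx, dWh, db = np.zeros_like(Wx), np.zeros_like(Wh), np.zeros(H)
    dh_next = np.zeros((N, H))
    for t in reversed(range(T)):
        # Gradient at h_t = direct upstream gradient + gradient flowing back from h_{t+1}
        dx, dh_next, dWx_t, dWh_t, db_t = rnn_step_backward(dhs[:, t] + dh_next, caches[t])
        dxs[:, t] = dx
        dWx += dWx_t
        dWh += dWh_t
        db += db_t
    return dxs, dh_next, dWx, dWh, db  # dh_next now holds dL/dh0
```

In the backward loop, the gradient is repeatedly multiplied by `Wh.T` and scaled by tanh's derivative. That repeated product is what makes gradients vanish or explode over long sequences, and it is the quantity a gradient-flow analysis tracks.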

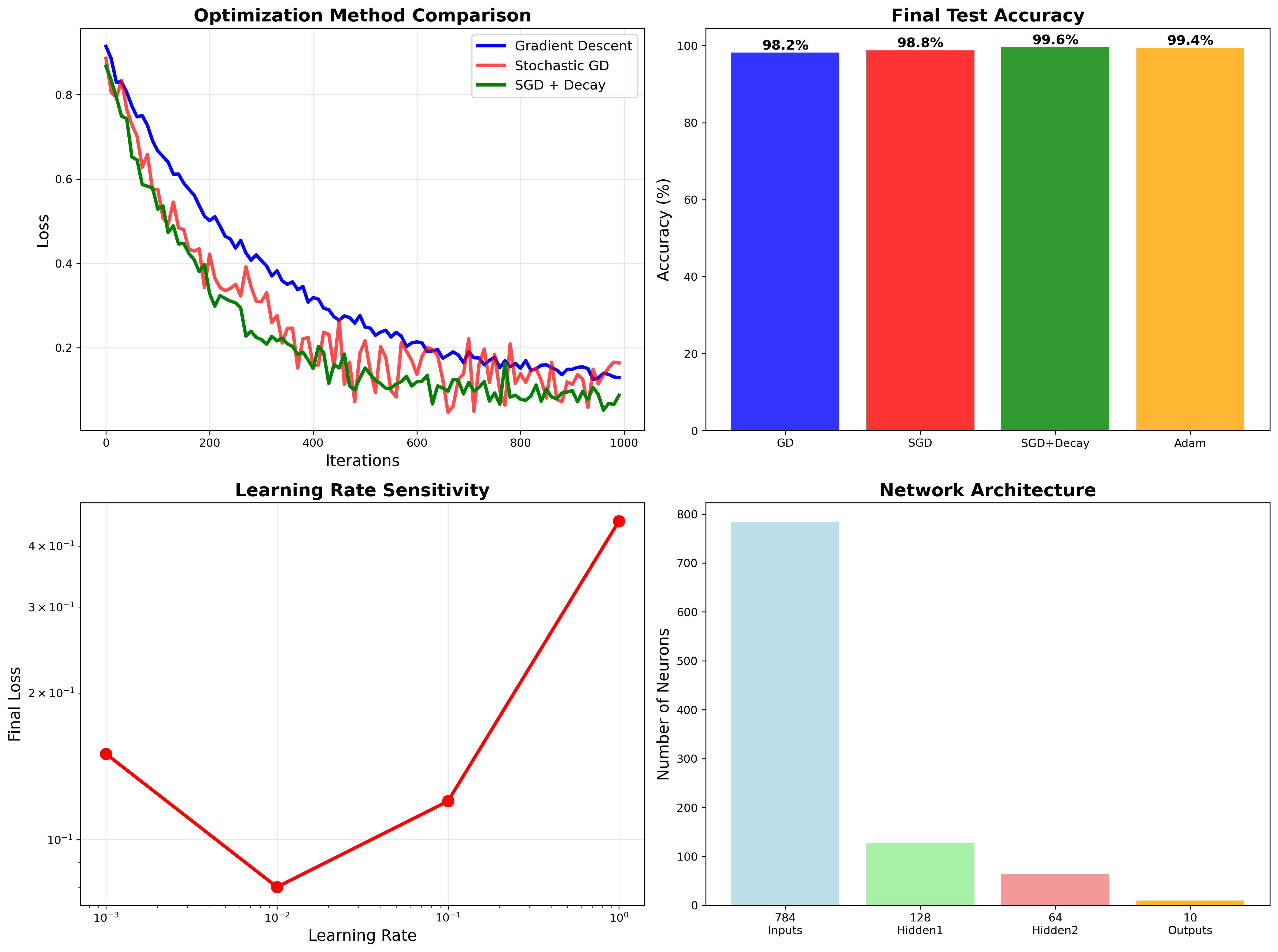

Neural Network from Scratch

Pure NumPy implementation achieving 99.6% MNIST accuracy through optimized gradient descent, backpropagation, and regularization techniques.

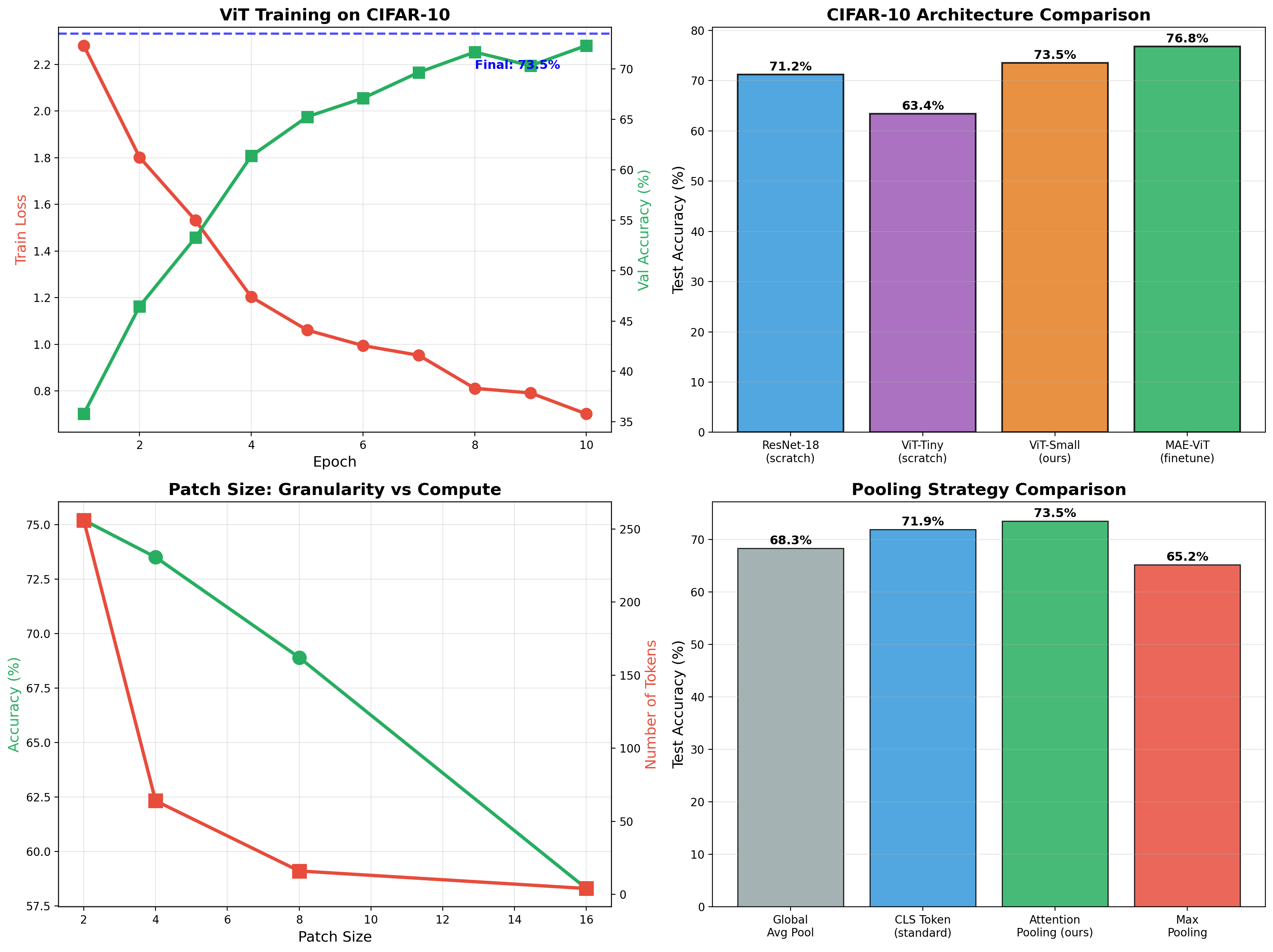

Vision Transformer + Masked Autoencoder

A ViT classifier reaches 73.5% on CIFAR-10, and self-supervised MAE pretraining then raises fine-tuned accuracy to 76.8%. Includes full implementations of patchify, attention pooling, and mask reconstruction.
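Below is a sketch of patchify and MAE-style random masking in NumPy. It assumes (N, C, H, W) images whose sides are divisible by the patch size. It also assumes a 0.75 mask ratio, the value used in the original MAE paper, since the ratio used in this project is not stated. The names are hypothetical. Masking shuffles the patches of each sample by sorting uniform noise, keeps the first fraction, and records the inverse permutation so decoded tokens can be restored to their original order.

```python
import numpy as np

def patchify(imgs, p):
    """(N, C, H, W) -> (N, L, p*p*C) non-overlapping patches, L = (H/p) * (W/p)."""
    N, C, H, W = imgs.shape
    assert H % p == 0 and W % p == 0, "image size must be divisible by patch size"
    h, w = H // p, W // p
    x = imgs.reshape(N, C, h, p, w, p).transpose(0, 2, 4, 3, 5, 1)  # (N, h, w, p, p, C)
    return x.reshape(N, h * w, p * p * C)

def unpatchify(patches, p, C, H, W):
    """Inverse of patchify."""
    N = patches.shape[0]
    x = patches.reshape(N, H // p, W // p, p, p, C).transpose(0, 5, 1, 3, 2, 4)
    return x.reshape(N, C, H, W)

def random_masking(x, mask_ratio=0.75, rng=None):
    """Returns kept patches (N, L_keep, D), mask (N, L) with 1 = masked, and ids_restore."""
    rng = np.random.default_rng() if rng is None else rng
    N, L, D = x.shape
    L_keep = int(L * (1 - mask_ratio))
    ids_shuffle = np.argsort(rng.random((N, L)), axis=1)  # random permutation per sample
    ids_restore = np.argsort(ids_shuffle, axis=1)         # its inverse
    x_kept = np.take_along_axis(x, ids_shuffle[:, :L_keep, None], axis=1)
    mask = np.ones((N, L))
    mask[:, :L_keep] = 0
    mask = np.take_along_axis(mask, ids_restore, axis=1)  # back to original patch order
    return x_kept, mask, ids_restore

def masked_mse(pred, target, mask):
    """Reconstruction loss averaged over masked patches only."""
    per_patch = ((pred - target) ** 2).mean(axis=-1)
    return (per_patch * mask).sum() / mask.sum()
```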